I’ve been thinking a lot lately about application architecture. Generally this is the part where an infrastructure guy starts to have smoke pour out of his ears as his brain begins to fry, but in this case there is a method to my madness. For the past few years, under the banners of three different employers, I have been working to help my infrastructure customers move along the path “up the stack”. To survive, IT must become more agile, infrastructure must start to be deployed via a true service delivery model, and the folks who run that infrastructure must start to function more as business analysts than as folks who simply keep the gears turning (less Scotty, more Spock).

The thing is, where are application architects and developers in all of this? In my opinion, a big failure of x86 virtualization is the way it was ultimately implemented. Legacy process was dragged forward into the new environment. Physical sprawl became virtual sprawl and newly formed “virtualization groups” got a seat at the table alongside all of the other warring silos. These walls are all starting to come down now as a result of IT being faced with almost insurmountable pressure by the business to transform. “Hybrid cloud” is the buzzword and what it really means is sophisticated management and orchestration layered on top of virtualization-enabled converged infrastructure and enhanced by seamlessly integrated public services. Applications and application stacks, however, continue to look a lot like they always have. When all of the whiteboarding ends, and all of the esoteric discussion about “workflow orchestration” and “service offerings” has come to a conclusion, and the time has come to implement, we are left with the task of defining what a service offering is. What does it look like? As it turns out, 9 times out of 10 a service offering is an N tier application which, of course, means it is a collection of very legacy servers. All of this begins to feel very archaic when you discuss layering it on top of your shiny new elastic computing utility powered by intelligent workflow orchestration!

It might seem obvious that the point of PaaS is to address this seeming (and growing) gap in maturity between how we design applications and how we are planning to deliver them, but in my opinion PaaS misses the mark as well. PaaS, as it exists today, simply takes managed code and abstracts its infrastructure foundations to varying degrees. In some cases there are minor tweaks to traditional application architectures (managed data services taking the place of traditional data sets, for example), but in essence the only difference between IaaS, PaaS and SaaS is in how the server foundation is managed.

In trying to puzzle through how applications might evolve to truly match the agility inherent in modern cloud infrastructure foundations, it struck me that the model already exists in an unlikely corner of enterprise computing – HPC. In the High Performance Computing model, very complex problems are broken down into small units of work and centrally coordinated. Results are then aggregated. HPC clusters grow to thousands of nodes as needed since they are designed to tackle highly computationally intensive tasks. At first glance, this doesn’t appear to have much in common with, for example, a traditional public facing website. Dig a bit beneath the surface, however, and there are powerful lessons to be learned here, similar to the way an F/A-18 design might influence a passenger airliner.
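To make the HPC pattern concrete, here is a minimal sketch of the split, coordinate and aggregate cycle described above. It is only an illustration under assumptions of my own: the function names (`split_into_units`, `process_unit`, `run_job`) are hypothetical, the "problem" is a trivial sum of squares, and a local thread pool stands in for what would be thousands of cluster nodes in a real HPC environment.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_units(data, unit_size):
    """Break one large problem into small, independent units of work."""
    return [data[i:i + unit_size] for i in range(0, len(data), unit_size)]


def process_unit(unit):
    """The work a single worker performs (here: a sum of squares)."""
    return sum(x * x for x in unit)


def run_job(data, unit_size=1000, workers=4):
    """Central coordinator: hand units to workers, then aggregate the results."""
    units = split_into_units(data, unit_size)
    # The thread pool is a stand-in for a pool of cluster nodes.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_results = list(pool.map(process_unit, units))
    return sum(partial_results)


if __name__ == "__main__":
    print(run_job(list(range(10_000))))
```

The point of the sketch is the shape rather than the arithmetic: the coordinator knows nothing about how a unit is processed, and a worker knows nothing about the overall problem.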

The goal of any application is to accomplish work. Take any application architecture and it can be broken down into constituent functional parts that can further be associated with capabilities. Consider this simple diagram:

Any application can be broken down into three core functional areas: interaction with some outside entity (a user or another process), processing of information, and storing and fetching of results. In a typical N tier application we define these areas as the web, app and data tiers. Generally, we assign these roles by layering specific software on top of general purpose operating systems. In the virtual world, we insert a layer of abstraction between these operating systems and the hardware:

When we consider popular cloud models, be it IaaS, SaaS or even PaaS, what we are really talking about is adding additional layers of automation, and abstraction, above and around the core infrastructure in order to manage the application stack, and application lifecycle, from a service oriented rather than server oriented standpoint. There is no hiding the fact, however, that the entire well orchestrated house is still built on the same basic foundation that applications have been built on since the 90s in the x86 world – lots and lots of Windows and/or Linux servers:

So on the one side of the solution architecture equation we have application functionality and requirements, the design of which we can see hasn’t changed much at all. On the other side of the equation lie the resources which applications consume. These can be broadly broken down into three big ones:

- Compute – very simply, computational capability. Can be general purpose (CPU or GPGPU) or task specific (DSP, traditional GPU, etc.)

- I/O – transport, external interface, connectivity. Essentially, any system needs to connect to some form of network, needs to connect to its storage, and needs interfaces to connect with outside systems

- Storage – data needs a place to go, either temporarily (in memory), for longer periods (online and nearline storage) or potentially forever (long term and archival storage)

Hypervisors have done a pretty good job of abstracting some of the complexity of the above bits from both the operating system and the applications which reside on them. The importance of this piece isn’t to be underestimated. It wasn’t long ago, in the x86 world, that each of the above areas needed to be considered from a physical impact point of view as part of any application architecture design discussion. Today, the resourcing and performance characteristics discussions between the folks who provide infrastructure and the folks who design applications have shifted to a capabilities and capacity discussion rather than a chat about physical kit. Today, some of the key metrics which drive system design are:

- IOPS requirement

- megacycles consumed

- bytes of RAM consumed

- throughput requirements for data in flight

- capacity requirements for data at rest

Looking at the above list, nothing really screams “Linux” or “Windows”, does it? This is because virtualization has abstracted hardware, but there has been no abstraction of software and control. Application architects no longer live in a world where they need to worry so much about the brand and size of a physical storage array, application server or network switch, but they do still very much live in a world of databases, web servers, operating systems, middleware, and custom application code. As the drive for efficiency at the core infrastructure layer continues, and infrastructure architects advance the maturity of their systems and move their own focus up the stack, I believe this incongruity will become more apparent. Something along the lines of “gee Mr or Mrs CIO, I would love to deliver more resource efficiency from our hardware or cloud service provider, but unfortunately we have all of these (relatively) bloated copies of Windows and Linux that we need to run in order to get anything done”.

Consider that inefficiency. On top of any pile of hardware today, on premises or in a cloud service provider’s XaaS, you have a thoroughly efficient operating system in the form of the hypervisor. This hypervisor OS is perfectly capable of instantiating any bit of code you might like. So far, what we are choosing to instantiate on these clever hypervisors are big blocks of code that have their roots in the paradigm of the physical world, in order to run app stacks that are an evolutionary extension of the way things have been done since the client server era. What if we did something different?

What I am proposing here is a framework whereby traditional application design assumptions are thrown out. Rather than building from the ground up, selecting technologies from buckets and then building functionality that matches these predefined buckets, I am suggesting that applications be built top down. The following components would be required:

- a workflow design engine – the workflow designer would provide a facility for expressing a business problem in terms of atomic units of work. A further facility would associate these units of work with a set of resource requirements and characteristics. Each “unit of work” would then have a block of attached managed code.

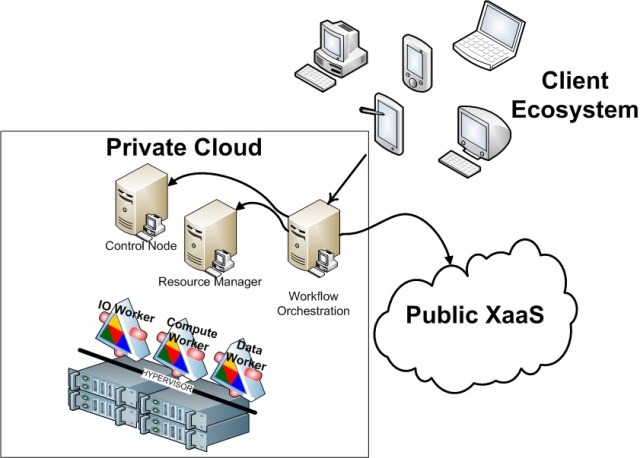

- a control node – the control node would be responsible for consuming, interpreting and assigning units of work. The control node would be able to understand that it has X units of computationally intensive Java code that need to execute, Y units of data access .NET code, Z units of basic PHP code, etc. It would then facilitate a resource request to satisfy these requirements.

- resource manager – the resource manager node would act as a bridge between the control node and the infrastructure workflow orchestration system. The job of the resource manager would be to map resource requirements to available capacity, understand and track existing capacity, generate alerts and trigger provisioning events via workflow orchestration.

- workflow orchestrator – the workflow orchestrator would function much as such systems do today. Instead of triggering heavyweight provisioning events for full operating systems and software stacks, it would be provisioning lightweight workers.

- worker nodes – these nodes would be the key to the transformation. Running directly on the hypervisor, these nodes would be designed to provide the smallest atomic unit of capability possible to satisfy typical application requests. A computationally intensive workstream might result in a worker node being spun up on GPGPU hardware with the proper interfaces in place to run the type of code being requested as efficiently as possible. Similarly, an IO intensive workstream would be spun up on a physical foundation with 10Gbps attached IO.
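As a thought experiment, the components above could be sketched roughly as follows. Everything here is hypothetical and of my own invention for illustration: the class names (`UnitOfWork`, `ResourceManager`, `ControlNode`), the profile labels, and the idea of counting capacity in "worker slots". A plain Python callable stands in for the attached managed code, and a list of provisioning requests stands in for the call out to a workflow orchestrator. Worker nodes are not modelled at all; the control node simply runs the code in place, and reserved slots are never released.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class UnitOfWork:
    """An atomic unit of work as the workflow designer might emit it."""
    name: str
    runtime: str                 # e.g. "java", "dotnet", "php"
    profile: str                 # e.g. "compute", "io" (assumed labels)
    code: Callable[[], object]   # stand-in for the attached managed code


class ResourceManager:
    """Maps resource requirements to available capacity."""

    def __init__(self, capacity: Dict[str, int]):
        self.capacity = dict(capacity)  # free worker slots per profile
        self.provisioning_requests: List[Tuple[str, int]] = []

    def reserve(self, profile: str, count: int) -> int:
        free = self.capacity.get(profile, 0)
        granted = min(free, count)
        self.capacity[profile] = free - granted
        shortfall = count - granted
        if shortfall:
            # Stand-in for triggering a provisioning event via orchestration.
            self.provisioning_requests.append((profile, shortfall))
        return granted


class ControlNode:
    """Consumes, interprets and assigns units of work."""

    def __init__(self, resources: ResourceManager):
        self.resources = resources

    def summarize(self, units: List[UnitOfWork]) -> Counter:
        """Count units per (runtime, profile): 'X units of Java', and so on."""
        return Counter((u.runtime, u.profile) for u in units)

    def dispatch(self, units: List[UnitOfWork]):
        demand = Counter(u.profile for u in units)
        slots = {p: self.resources.reserve(p, n) for p, n in demand.items()}
        results, deferred = {}, []
        for unit in units:
            if slots[unit.profile] > 0:
                slots[unit.profile] -= 1
                results[unit.name] = unit.code()  # a worker node would run this
            else:
                deferred.append(unit.name)        # waits for new capacity
        return results, deferred


if __name__ == "__main__":
    units = [
        UnitOfWork("price-model", "java", "compute", lambda: 2 ** 10),
        UnitOfWork("load-orders", "dotnet", "io", lambda: "orders loaded"),
        UnitOfWork("render-page", "php", "io", lambda: "page rendered"),
    ]
    manager = ResourceManager({"compute": 1, "io": 1})
    results, deferred = ControlNode(manager).dispatch(units)
    print(results, deferred, manager.provisioning_requests)
```

With one slot of each profile available, the third unit is deferred and the resource manager records a request for one more IO worker, which is the point at which the orchestrator would provision a lightweight worker rather than a full operating system and software stack.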

Clearly the devil would be in the details with this approach, but I do think that the future of application architecture must move along a path similar to what is outlined above in order to properly exploit the potential that is slowly being unlocked by the shift from traditional infrastructure foundations to a hybrid IaaS model.