Part the First: Introduction

Momentum in the cloud space continues to accelerate. Trends that have been clearly indicated for a few years now are starting to evolve rapidly. In short, IT is dead, all hail Shadow IT. Dramatic, but perhaps not really accurate. The reality is we are solidly in the midst of a period of creative destruction. This isn’t the shift from physical to virtual, it’s the shift from mainframe to distributed systems.

What is the proof of this? There are two indicators that mark a period of creative destruction in technology: the emergence of dramatically new design patterns and a shift in sphere of control. The latter is clear. Core IT, which has always struggled in its relationship with the business it serves, is now ceding authority to architects, analysts and developers in the business lines, who are tied closely to profit centers and whose work has value that can be clearly articulated to the CEO. This is a shift that has been brewing for quite some time, but the emergence of viable public cloud platforms has finally enabled it. The former, the shift in design patterns, is flowing directly from this shift to a more developer-centric IT power structure. In contrast to the shift from physical to virtual, which saw almost no evolution in how applications were built and managed (primarily because it was core IT infrastructure folks who drove the change), the shift from virtual to cloud is bringing revolutionary change.

And if there is any doubt that this is vital change, just step back from technology for a moment and consider this: what business wouldn’t want a continually right-sized technology footprint, located where it is needed when it is needed, which yields high value data while serving customers, all for a cost that scales linearly with usage? That is the promise that is already being delivered in public cloud by those who have mastered it. The rub, though, is in mastering it. Programmatic control and management of infrastructure (devops) isn’t easy, and the tools aren’t quite there yet for developers to be able to stop worrying about it.

Part the Second: Historical Context

Before we get to where things are headed, it’s worth revisiting how we got here. Taking a look back at history, “cloud” became a meaningful trend by delivering top down value. The first flavors of managed service which caused the term to catch on were “Software as a Service” and “Platform as a Service”, both of which are application first approaches that disintermediate core IT. SaaS obviously brings packaged application functionality directly to the end user who consumes it with minimal IT intervention. Salesforce is the great example here, causing huge disruption in a space as complex as CRM by simply giving sales folks the tools they needed to do their job with a billing model they could understand and sell internally to the business.

PaaS sits at the other side of the spectrum and was about giving developers the ability to build and deploy applications without caring about pesky infrastructure components like servers and storage. Google App Engine and Microsoft Azure (and a strong entry from Salesforce in Force.com) blazed the trail here. Ironically, though, most developers weren’t quite ready to consume technology in this way. The shift in design thinking hadn’t occurred yet, and the platforms had some maturation to do (initial releases were too limiting for current design approaches while not bringing any fully realized alternative). It was Amazon, in the market since 2006 with S3 and EC2 (basically storage and virtual machines as a service), that filled the gap. Infrastructure as a Service was born, in turn giving birth to confusing things like “hybrid cloud”, and as early PaaS players like Google and Microsoft pivoted to also provide IaaS, it looked like maybe cloud would be more evolution than revolution.

Part the Third: Shifting Patterns

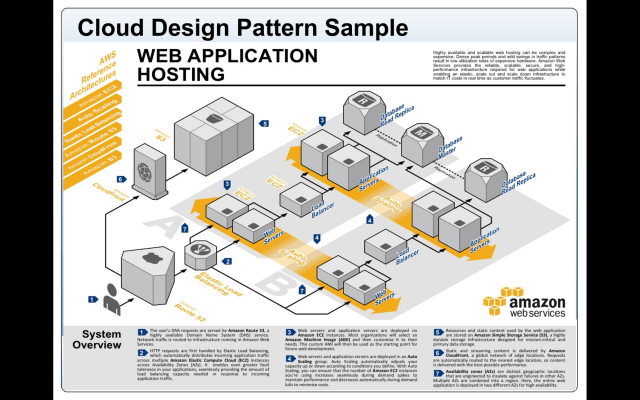

Looking deeper though, it’s clear that commodity IaaS is just a stopgap. Even the AWS portfolio reveals all sorts of services, both vertically and horizontally, that are well beyond commodity infrastructure. The decade-long shift from physical to virtual brought no real change in how servers were deployed and managed, or in how applications were built; it just adapted existing processes and patterns to a faster deployment model. The shift to cloud, by contrast, has already brought revolutionary change in 3 years when it comes to design patterns and how resources are managed and allocated. The best way to understand this is to consider how cloud design patterns compare to legacy design patterns. The AWS approach is a bit of a bridge between past and future here and so provides a very easy to understand example:

A typical 3 tier app is illustrated above. Web, app, data, scale out, scale up; simple stuff. Even in the AWS example though, which maps very closely to a traditional infrastructure approach by design, there are some dramatic differences. The A and B zones in the diagram represent AWS availability zone diversity. What this means is physically discrete data centers. Now note that load balancing, a fairly straightforward capability, is spanning these availability zones. Drilling down a layer, we see traditional server entities, so the atomic units of the service are still Linux or Windows VMs, but note that they are not only “autoscaling”, but are autoscaling across the physically discrete data centers. The implication of this architecture is that this application actually consists of n Web and App nodes across two physical locations with dynamically managed access and deployment. Moving to the data tier, we can see similar disruption. Rather than a standard database instance, we can see a data service scaling horizontally across the physical locations. Finally, unstructured data assets aren’t sitting in a storage array, but rather are sitting in a distributed object store consumed as a service.
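To make the idea of a tier that autoscales across availability zones a little more concrete, here is a minimal sketch in Python. It is a toy model only: the `AutoScalingGroup` class, its methods and the zone names are hypothetical and are not the AWS API. It simply assumes that new nodes go to the least populated zone and that losing a zone is repaired by launching replacements elsewhere.

```python
from collections import Counter
from dataclasses import dataclass, field
from itertools import count


@dataclass
class AutoScalingGroup:
    """Toy model of one tier (web or app) spread across availability zones."""
    zones: list
    min_size: int
    max_size: int
    nodes: dict = field(default_factory=dict)  # node id -> zone
    _ids: count = field(default_factory=count, repr=False)

    def _launch(self):
        # Place the new node in the zone that currently holds the fewest nodes.
        per_zone = Counter({zone: 0 for zone in self.zones})
        per_zone.update(self.nodes.values())
        zone = min(self.zones, key=lambda z: per_zone[z])
        self.nodes[f"node-{next(self._ids)}"] = zone

    def _terminate(self):
        # Remove a node from the most populated zone so the tier stays balanced.
        per_zone = Counter(self.nodes.values())
        zone = max(self.zones, key=lambda z: per_zone[z])
        victim = next(n for n, z in self.nodes.items() if z == zone)
        del self.nodes[victim]

    def reconcile(self, desired):
        """Grow or shrink the tier until it matches the (clamped) desired size."""
        desired = max(self.min_size, min(self.max_size, desired))
        while len(self.nodes) < desired:
            self._launch()
        while len(self.nodes) > desired:
            self._terminate()

    def fail_zone(self, zone):
        """Simulate losing an entire data center."""
        self.nodes = {n: z for n, z in self.nodes.items() if z != zone}
        self.zones = [z for z in self.zones if z != zone]


group = AutoScalingGroup(zones=["zone-a", "zone-b"], min_size=2, max_size=10)
group.reconcile(5)            # nodes are spread over both zones
group.fail_zone("zone-a")     # one data center disappears
group.reconcile(5)            # capacity is rebuilt in the surviving zone
```

The point of the sketch is that the application never names a specific server: it states a desired size and lets the control logic decide where the nodes live.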

On prem, much of this is very difficult (geographic diversity, autoscaling, giant object store) and some of it is impossible (capacity on demand, database run as a service). For the N-tier app use case there is no immediate impact to the design pattern (hence Amazon’s mindshare success in legacy apps), but the implications of the constructs are clear. If you can dynamically scale infrastructure globally on demand, and maintain service availability, there is no need to limit your architectures based on the operational limits of traditional infrastructure. This is where cloud design patterns, and the notion of “design for failure” (vs. the legacy design approach, where you assume ironclad fault tolerant infrastructure), were born. At this stage none of this is theoretical, nor is it just a Netflix or NASA game. Even traditional enterprises have real solutions in production.

How do we operationalize all of this though? That’s been the really hard part so far. If there is one advantage to traditional infrastructure, it is that there is deep tribal knowledge and mature tooling to manage it. Cloud platforms really are infrastructure as code and expect management via API. Old tools haven’t caught up, or no longer apply, and new tools have steep learning curves or require moderate development skill. This is why we have seen the rise of devops and the explosion in interest for platforms like Chef, Puppet, Mulesoft, etc. It’s a tough problem though, because ultimately devs don’t want to inherit ops (this would count as a huge cloud downside for them), and it’s not clear that ops folks can reskill quickly enough, or at all, to transition. In short, there is currently a vacuum, and most folks are betting that the space will hash out quickly and that the tools will evolve before there is a real need to solve this from the customer side. Personally I see most IT shops investing very cautiously here and “buying operate”, even as they shift real production to cloud, until the directional signals are more clear.
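For readers who have not met “design for failure” in code, the following is a minimal Python sketch of the mindset: the caller assumes any replica can be down and routes around it rather than trusting the infrastructure to be fault tolerant. Everything here (`ReplicaDown`, `make_replica`, `call_with_failover`, the zone names) is hypothetical and only illustrates the pattern; real systems add timeouts, backoff and health checks.

```python
class ReplicaDown(Exception):
    """Raised when a replica cannot serve the request."""


def make_replica(name, healthy=True):
    """Build a stand-in for a service endpoint living in one location."""
    def handle(request):
        if not healthy:
            raise ReplicaDown(f"{name} is unavailable")
        return f"{name} served {request}"
    return handle


def call_with_failover(replicas, request, attempts_per_replica=2):
    """Try each replica in turn, expecting failures, and move on when one occurs."""
    errors = []
    for replica in replicas:
        for _ in range(attempts_per_replica):
            try:
                return replica(request)
            except ReplicaDown as exc:
                errors.append(exc)
    raise ReplicaDown(f"all replicas failed after {len(errors)} attempts")


replicas = [make_replica("zone-a", healthy=False), make_replica("zone-b")]
print(call_with_failover(replicas, "GET /"))
```

The legacy approach would be a single call to a single endpoint, with availability delegated entirely to the hardware underneath it.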

Part the Fourth: The Topic at Hand

So what are the directional signals? Consider: why should we limit ourselves to the constructs of legacy infrastructure if the inherent flexibility of the service can free us from them? The answer is that we shouldn’t, and the directional signals are proving this. So where are these technologies headed, what do they mean, and why? I’ve chosen a few of the big ones to explore. Before we get into specifics, let’s spend some time thinking about what is really required to get to “self operating infrastructure” and what might be missing from the architecture presented above.

The Trinity

Where the proverbial rubber meets the road are, of course, our basic resource units. We need bytes of RAM to hold data and code being currently executed, we need bytes of long term storage to hold them at rest, we need compute cycles to process them, and we need network connectivity to move them in and out. Compute, network and storage: these abstractions remain the holy trinity and are so fundamental that nothing really changes here. Today, access to these resources is gated either by a legacy operating system (for compute, network, and local storage) or an API to a service (for long term storage options). Unfortunately, the legacy OS is a pretty inefficient thing at scale.

A Question of Scale…

Up until very recently, traditional operating systems really only scaled vertically (meaning you build a bigger box). Scaling horizontally, through some form of “clustering”, was either limited to low scale (64 servers, let’s say) and “high availability” (moving largely clueless apps around if servers died), or achieved high scale by way of application resiliency, which could scale despite clueless base operating systems (Web being the classic example here). This kind of OS agnostic scaling depends on lots of clever design decisions and geometry and is a fair bit of work for devs. In addition to these models, there were some more robust niche cases. Some application specific technologies were purpose built to combine both approaches (an example being Oracle’s “all active shared everything” Real Application Clusters). And finally, the most interesting approaches were found in the niches where folks were already dealing with inherently massive scale problems. This is where we find High Performance Computing and distributed processing schedulers and also, in the data space, massive data analytics frameworks like Hadoop.

Breaking down the problem domain, we find that in tackling scale, we need some intelligence that allocates and tracks the usage of resources; accepts, prioritizes and schedules jobs against those resources; stores, manages and persists data; and provides operational workflow constructs and interfaces for managing the entire system. There is no “one stop shopping” here. Even the fully realized use cases above are a patchwork tapestry of layered solutions.
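As a rough illustration of the first two responsibilities listed above (tracking resources, then accepting, prioritizing and scheduling jobs against them), here is a small Python sketch. The `Job` and `ToyScheduler` names, the CPU-only resource model and the first-fit placement rule are all assumptions made for the example; data persistence and operational tooling are deliberately left out.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Job:
    priority: int                      # lower number is scheduled first
    name: str = field(compare=False)
    cpus: int = field(compare=False)


class ToyScheduler:
    def __init__(self, nodes):
        self.free = dict(nodes)        # node name -> free CPUs (resource tracking)
        self.queue = []                # priority queue of jobs waiting for capacity
        self.running = {}              # job name -> (node name, CPUs held)

    def submit(self, job):
        heapq.heappush(self.queue, job)

    def schedule(self):
        """Place queued jobs in priority order; jobs that do not fit stay queued."""
        waiting = []
        while self.queue:
            job = heapq.heappop(self.queue)
            node = next((n for n, cpus in self.free.items() if cpus >= job.cpus), None)
            if node is None:
                waiting.append(job)
                continue
            self.free[node] -= job.cpus
            self.running[job.name] = (node, job.cpus)
        for job in waiting:
            heapq.heappush(self.queue, job)

    def finish(self, job_name):
        """Release the resources held by a completed job."""
        node, cpus = self.running.pop(job_name)
        self.free[node] += cpus


scheduler = ToyScheduler({"n1": 4, "n2": 2})
scheduler.submit(Job(2, "batch", 4))
scheduler.submit(Job(1, "web", 2))
scheduler.submit(Job(3, "report", 2))
scheduler.schedule()   # "web" and "report" are placed; "batch" waits for 4 free CPUs
```

Even this toy shows why the real thing is layered: the moment jobs have different shapes, naive placement strands capacity, and something smarter has to sit on top.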

…and Resource Efficiency

Not only are the traditional OS platforms lacking in native horizontal scaling capabilities, but getting back to our resource trinity, they aren’t particularly efficient at resource management within a single instance. Both Linux and Windows tend to depend on the mythical “well behaved app” and are limited in their ability to maximize utilization of physical resources (hence why virtualization had such a long run: it puts smarter scheduling intelligence between the OS and the hardware). But how about inside that OS? Or how about eliminating the OS altogether? This brings us nicely to a quick refresher on containers. The point of any container technology (Docker, Heroku, Cloud Foundry, etc.) is to partition up the OS itself. Bringing back a useful illustration contrasting containerized IaaS to Beanstalk from the container entry, what you get is an architecture that looks like this:

The hypervisor brokers physical resources to the guest OS, but within the guest OS, the container engine allocates the guest OS resources to apps. The developer targets the container construct as their platform, and you get something a step beyond, but similar to, a JVM or CLR.

There is still a fundamental platform management question here though. We now have the potential for some great granular resource control and efficiency and, if we can eventually eliminate some of these layers, a huge leap forward in both hardware utilization and developer freedom, but we really don’t have any overarching control system for all of this. And now we’ve found the eye of the storm.

A War of Controllers

Standing in between the developer and their user, given everything discussed above, remains an ocean of complexity. There is huge promise and previously unheard of agility to be had, but the deployment challenge is daunting. Adding containers into the mix actually increases the complexity because it adds another layer to manage and deploy. One way to go is to be brilliant at devops and write lots of smart control code. Netflix does this. Google does this to run their services, as do Microsoft and Facebook. Outside of the PaaS offerings though, you only realize the benefit as a side effect when you consume infrastructure services. That’s changing however. There is pressure from the outside coming from some large open source initiatives, and this is causing an increased level of sharing and, quite likely, ultimately some level of convergence. For now, the initiatives can be somewhat divided into top down and bottom up.

The View from the Top

Top down we’re seeing the continuing evolution of cloud scale technologies focused specifically on code or data. Google’s MapReduce, which inspired the open source Hadoop, is a great early example of this. Hadoop creates and manages clusters on top of Linux for the express purpose of running analytics code against datasets which have an analytics challenge that fits into the prescribed map/reduce approach (out of scope here, but great background reading). Other data centric frameworks are building on this. Of particular note is Spark, which expands the focused mission of Hadoop into a much broader, and potentially more powerful, general data clustering engine that can scale out clusters to process data for an extensible range of use cases (machine intelligence, streaming, etc.).
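For anyone who has not seen the map/reduce shape before, here is the classic word count written as a single-process Python sketch. It does not use Hadoop or Spark, and the function names are my own; it only shows the three steps (map, shuffle, reduce) that a framework would distribute across a cluster.

```python
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs):
    """Group all emitted values by key, as the framework would between phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Collapse all values for one key into a single result."""
    return key, sum(values)


def word_count(documents):
    pairs = (pair for doc in documents for pair in map_phase(doc))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())


print(word_count(["the cloud", "the OS"]))
```

Because each map call touches one document and each reduce call touches one key, both phases can be spread over as many nodes as the data requires, which is exactly the property the cluster frameworks exploit.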

On the code side, the challenge of placing containers has triggered lots of work. Google’s Kubernetes is a project which aims to manage the placement of containers not only into instances, but into clusters of instances. Similarly, Docker itself is expanding its native capabilities beyond a single node with Swarm, which seeks to expand the single node centric Docker API into a transparently multi-node API.
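The container placement problem is easy to state in code. The sketch below uses a simple first-fit-decreasing heuristic on memory only; this is an assumption chosen for illustration and is not how Kubernetes or Swarm actually make placement decisions. The function name and the container and node names are hypothetical.

```python
def place_containers(containers, nodes):
    """Assign containers to nodes with a first-fit-decreasing heuristic.

    containers: container name -> memory needed (MB)
    nodes:      node name -> memory capacity (MB)
    Returns (placement, unplaced), where placement maps container -> node.
    """
    free = dict(nodes)
    placement, unplaced = {}, []
    # Largest containers first, so big ones are not squeezed out by small ones.
    for name, need in sorted(containers.items(), key=lambda kv: kv[1], reverse=True):
        target = next((node for node in free if free[node] >= need), None)
        if target is None:
            unplaced.append(name)
        else:
            free[target] -= need
            placement[name] = target
    return placement, unplaced


containers = {"web": 512, "api": 1024, "cache": 2048, "db": 4096}
nodes = {"node-1": 4096, "node-2": 4096}
placement, unplaced = place_containers(containers, nodes)
print(placement, unplaced)
```

A real controller also has to weigh CPU, affinity, failure domains and rescheduling when nodes die, which is why this layer has become its own category of software.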

…Looking Up

Bottom up we find initiatives to drag the base OS itself kicking and screaming into the cloud era. Or, in the case of CoreOS, replace it entirely. Derived from Chrome OS, CoreOS asks the question “is traditional Linux still applicable as the atomic unit at cloud scale?” I believe the answer is no, even if I’m not committing to betting that the answer is CoreOS. In order to be a “Datacenter OS”, capabilities need to be there that go beyond Beowulf. I’m not sure if it’s there yet, but CoreOS does provide fleet, which is a native capability for pushing components out to cluster nodes.

Taking a less scorched earth approach, Apache Mesos aims more at being the modern expression of Beowulf. More an extensible framework built on top of a set of base clustering capabilities for Linux, Mesos is extremely powerful at orchestrating infrastructure when the entire “Mesosphere” is considered. For example, Chronos is “cron for Mesos” and provides cluster wide job scheduling. Marathon takes this a step further and provides cluster wide service init. Incidentally, Twitter achieves their scale through Mesos, lest anyone think this is all smoke and mirrors! And of course the logical question here might be “does Mesos run on CoreOS?” And the answer is YES, just to keep things confusing.

What you Don’t See!

As mentioned above, Google, Microsoft, Amazon and Facebook all have “secret”, or even “not so secret” (Facebook has published its datacenter hardware designs through the Open Compute Project) sauce to accomplish all, or some, of the above. Make no mistake… This space is the future of computing and there is a talent land grab happening.

And the Winner Is!

Um… sure! Honestly, this space is still hashing out. There is a lot of overlap and I do feel there will need to be a lot of consolidation. And ultimately, the promise of cloud is really just bringing all of this back to PaaS, but without the original PaaS limitations. If I’m a developer, or a data scientist, I want to write effective code and push it out to a platform that keeps it running well, at scale, without me knowing (or caring) about the details. I’m buying an SLA, not a container distribution system, as interesting as the plumbing may be!