Another area that causes a lot of confusion is the relationship between virtual CPUs (vCPU), physical CPUs (pCPU), “logical” CPUs (lCPU for lack of a better acronym), hyperthreading and how all of these things relate. First, I think it is important to establish some baseline definitions. Let’s leave virtualization out of it for now. Before the advent of multi-core processors, it was pretty easy to define physical CPU. You looked at a motherboard, counted the sockets, and that was how many physical CPUs a machine had. Today, thanks to big advancements in process technology, each socket really represents multiple physical CPUs. There has been an unfortunate trend to refer to these as “logical CPUs” which, in my opinion, is a really serious misnomer, and I will explain why. Consider this image:

That beauty is an AMD quad core minus ceramics/heat spreader. You can actually see, with the naked eye, that there are 4 physical processing cores there. They are left/right, top/bottom and are joined by dense interconnects. Consider this diagram:

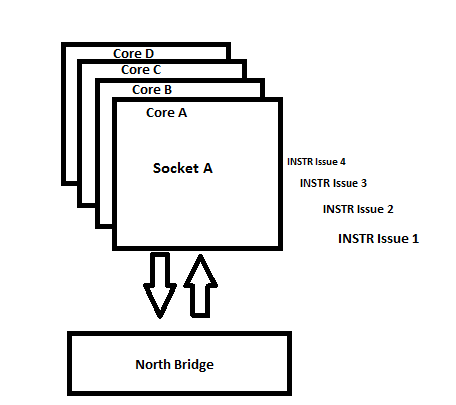

This is a traditional, two socket, multi-processing system. Notice that each CPU has independent connections to the “outside world” (let’s leave aside the physical topology of these connections – whether they are shared or discrete – as this is a topic for another time), and each has instruction issues. This doesn’t mean that they need therapy, but rather that each CPU can be issued an instruction at the same time. There are two CPUs and each can be issued one instruction at a time, for a total of two “issues”. Now consider a multi-core design:

Again, we will leave aside bus topologies for now as they are not germane. Once again we have a connection to the outside world, and we have multiple issues (in this case 4). Each issue is assigned to a separate processing core. So we have the ability to issue four instructions at once, to four different processing cores. Each of these cores has an independent pipeline. That is the key differentiator here. For a processing core to be considered “physical”, in my opinion, it must have an independent pipeline. What that means is that if you give “Core 1” an instruction, it can carry that instruction out to completion without worrying about “Core 2”. This is simplified, but you get the idea.

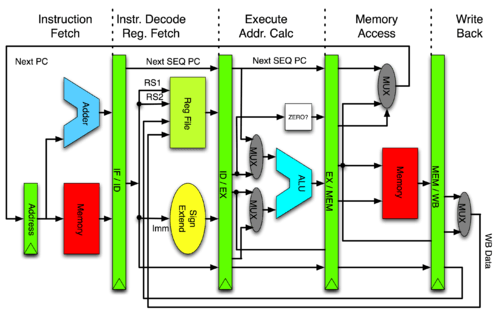

Now let’s talk a bit about hyperthreading and “logical CPUs”. To understand hyperthreading, it is important to understand just how a CPU pipeline works. Consider this great diagram. I stole it from a discussion on the MIPS architecture on Wikipedia, but it doesn’t matter as the concepts apply universally (it is the implementation that differs):

As you can see, the process flow by which a processor executes a single instruction is actually quite complex. We have essentially four primary phases:

- Fetch – the processor reads the next instruction from the sequence of instructions that is stored in memory and issues it

- Decode – the processor decodes the instruction, figuring out what to do with it. Incidentally, this is where the primary difference between “complex instruction sets” (CISC) and “reduced instruction sets” (RISC) originally came into play. On a traditionally CISC instruction set like x86, an “instruction” might actually be a “macro instruction” in which a number of “micro operations” are embedded. A real world example would be an “ADD” in assembler whose destination is a memory location. That single instruction is actually a load from memory, followed by an add, followed by a store of the result. In RISC instruction sets, tasks are broken down into smaller atomic units and more of them are executed. This is the reason RISC compilers (the software that turns high level programming in a language like BASIC or C into executable machine code) were more complex and RISC executables were often larger. /end trivia

- Execute – the processor next executes the instruction. In modern “super scalar” processors, there are multiple “execution units”. Super scalar came from the RISC world, where the simple instructions allowed for simpler execution units that could be “scaled out”, allowing for two instructions to be executed at once. Typical “execution units” are “integer units” (which handle integer math) and “floating point units” which handle, shocker, floating point math. With the advent of super-scalar architecture, this is where pipelining started to get fancy and a single CPU could work some magic like executing two instructions at once. Essentially, before an instruction was “finished” another one would be grabbed (issued) to be executed at the same time. Lots of conditions had to be met (primarily, an execution unit had to be free). To make this process more efficient and less dangerous, facilities were built around it. Modern CPUs can execute out of order (take an instruction a few down rather than the next one) and cherry pick which instructions can be executed together, with help from techniques like “branch prediction”. The logic here is that if the outcome of instruction A might impact what instruction B does, then they cannot be executed at once. If instruction C or D might be executed next (after B) depending on the outcome of A and B, the processor logic will pick either C or D to execute in parallel with A based on “branch prediction”. If it is wrong, it wasted time and has to do a do-over. If it is right, it gained some big efficiency (100%).

- Store – once the instruction has completed executing, the results are stored back in the data space of memory
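The overlap between these four phases can be sketched in a few lines of Python. This is only a toy model under some loud assumptions: an ideal in-order pipeline where every phase takes exactly one cycle, with no stalls, no branches, and a single instruction issued per cycle (so it shows pipelining only, not super-scalar or out-of-order execution). The names `pipeline_schedule` and `total_cycles` are made up for this illustration.

```python
# Toy model of the four phases described above. Assumes an ideal in-order
# pipeline: one cycle per phase, no stalls, one instruction issued per cycle.
STAGES = ["Fetch", "Decode", "Execute", "Store"]


def pipeline_schedule(instructions):
    """Map each cycle number to the (instruction, stage) pairs active in it."""
    schedule = {}
    for i, instr in enumerate(instructions):
        for s, stage in enumerate(STAGES):
            # Instruction i enters at cycle i and advances one stage per cycle.
            schedule.setdefault(i + s, []).append((instr, stage))
    return schedule


def total_cycles(n_instructions, pipelined=True):
    """Cycles needed to finish n instructions, with or without overlap."""
    if n_instructions == 0:
        return 0
    if pipelined:
        # The first instruction fills the pipeline, then one completes per cycle.
        return len(STAGES) + n_instructions - 1
    # Without overlap, every instruction runs all four phases on its own.
    return len(STAGES) * n_instructions


if __name__ == "__main__":
    for cycle, active in sorted(pipeline_schedule(["A", "B", "C"]).items()):
        print(cycle, active)
    print(total_cycles(4), "cycles pipelined vs", total_cycles(4, pipelined=False))
```

In this model, while instruction A is being decoded, instruction B is already being fetched, which is the "grab another one before the first is finished" behavior described in the Execute step.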

The above can be hard to digest, but there is a ton of reference out there on the web which covers it at whatever level of detail you need. Everything from the physical signaling of all of these units (Intel and AMD architecture and engineering briefs) up to big blobby fuzzy pictures like I drew above (and often in color!). The main thing for understanding hyperthreading is to at least grasp the basics of what is outlined above.

With hyperthreading, Intel decided that it would be kind of neat if the super-scalar architecture were essentially extended to the OS: allow the operating system’s CPU scheduler to view the super-scalar nature of the processor as two actual CPUs. In practice, the efficiency of this design is hugely dependent on OS implementation, OS scheduler efficiency, and workload (big emphasis on workload), but either way, it is a neat trick. Consider:

In a hyperthreading enabled processor, for each physical CPU (be they cores within a multi-core CPU, or individual CPUs in a multi-socket system), two logical processors are presented to the operating system. As you can see above, each logical CPU effectively has an issue. What this really means is that a single CPU core is armed with two issues (in comparison to the prior diagrams where each physical CPU/core had a single issue). And so this is why I feel it is very important to use the terminology logical CPU only in the context of something like hyperthreading.
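To keep the terminology straight, here is a minimal Python sketch of the counting rules as I have defined them in this post: a physical CPU is a core with its own pipeline, and a logical CPU is what the operating system sees once hyperthreading is taken into account. The `Host` class and its field names are hypothetical, purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    """Hypothetical description of a server, using this post's definitions."""
    sockets: int
    cores_per_socket: int
    threads_per_core: int = 1  # 2 when hyperthreading is enabled

    @property
    def physical_cpus(self) -> int:
        # Every core has its own independent pipeline, so every core counts.
        return self.sockets * self.cores_per_socket

    @property
    def logical_cpus(self) -> int:
        # What the operating system's scheduler is presented with.
        return self.physical_cpus * self.threads_per_core


if __name__ == "__main__":
    host = Host(sockets=2, cores_per_socket=4, threads_per_core=2)
    print(host.physical_cpus, "physical CPUs,", host.logical_cpus, "logical CPUs")
```

Without hyperthreading the two numbers are the same, which is exactly why calling plain cores “logical CPUs” muddies the water.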

So far we haven’t discussed virtualization at all; and with good reason! The above is already pretty complex, but we are about to complicate matters further! In a virtualization system, what is essentially happening (and this is true regardless of whether it is client virtualization with a full host OS, slim hypervisor with a parent partition, or monolithic hypervisor like ESX/ESXi) is that a piece of software called a “virtual machine monitor”, in concert with hardware support these days (AMD-V, Intel VT) presents to a “guest” operating system what appears to be physical hardware to that guest operating system but what is, in fact, a software simulation.

The virtual machine monitor is a kind of multiplexer that brokers access to physical resources from multiple clients. The physical resources are allocated into pools that are then virtualized. Physical resources are obviously finite, so some very careful scheduling and resource management is done by the virtual machine monitor in order for it to be effective (this is the “secret sauce” in virtualization). The two most important physical resources that must be monitored closely are processor time and RAM. Storage, network access and IO are also absolutely vital, but are a bit out of scope for this entry. RAM is as well, so let’s focus on processor time.

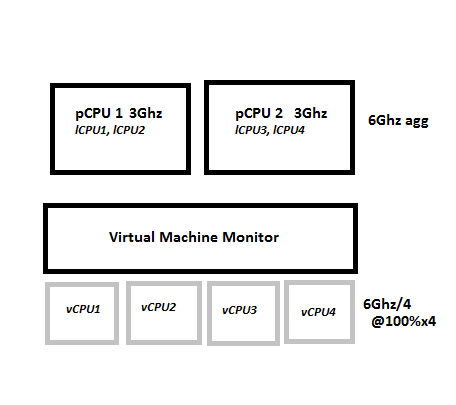

I say “processor time” instead of CPU for a very specific reason. We are now moving towards the definition of a “virtual CPU” and, in my opinion, attempting to create hard line mappings between “virtual CPUs” and “physical CPUs” has caused a lot of confusion for virtualization professionals and often hampers their ability to approach capacity planning the right way. Time for another diagram:

I have included clock speed in this diagram for a very specific reason. Considering the above, we can see that we have two physical 3GHz processors (whether this is two single core CPUs or a single dual core doesn’t matter at this point). This gives us a total physical processing capacity of 6GHz. It is important to view things this way because it is how the virtual machine monitor views them. The virtual machine monitor will represent that physical processing capacity to its guest operating systems as some number of virtual CPUs which, at their essence, are scheduling time within the virtual machine monitor – virtual cycles which will then be mapped to a physical CPU schedule maintained by the virtual machine monitor.

VMware presents some really broad guidelines on vCPUs. On the one hand, you can create a maximum of 512; on the other hand, the recommended guidance is to not create many more than 4 “per core” (or physical CPU) “based on workload”. At first pass, this seems overly broad, but there is good reason for it. If you consider this in terms of processor time sharing rather than hard line mappings, it becomes a lot clearer.

In the example above, we have chosen to configure a total of 4 vCPUs in front of those two physical CPUs. What this means is that if each vCPU is running at 100% utilization, 100% of the time, it can effectively be the equivalent of a 1.5GHz CPU. The reason for this is that the two physical CPUs have 6GHz of processing capacity in aggregate, and 6GHz shared evenly across 4 vCPUs is 1.5GHz each. Realistically, however, it is very unlikely that any workload will be at 100% utilization 100% of the time. What is the processor utilization trend of a DNS server, for example? A DNS server might actually be able to get by just fine with a 200MHz “CPU” with occasional spikes for zone transfers, boot storms, etc.
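The arithmetic in this example can be written down as a short Python sketch. It is deliberately simplistic and rests on assumptions that are mine, not anything a hypervisor documents: every vCPU is busy all the time, the scheduler shares time perfectly evenly, and there is no scheduling overhead. The function names are hypothetical.

```python
def aggregate_capacity_ghz(physical_cpus: int, clock_ghz: float) -> float:
    """Total processing capacity, viewed as one pool of clock cycles."""
    return physical_cpus * clock_ghz


def worst_case_ghz_per_vcpu(physical_cpus: int, clock_ghz: float, vcpus: int) -> float:
    """Effective clock per vCPU if every vCPU is 100% busy, 100% of the time.

    Assumes perfectly even time sharing and zero scheduling overhead.
    """
    if vcpus <= 0:
        raise ValueError("vcpus must be a positive integer")
    capacity = aggregate_capacity_ghz(physical_cpus, clock_ghz)
    # A single vCPU can never run faster than one physical CPU.
    return min(clock_ghz, capacity / vcpus)


if __name__ == "__main__":
    print(aggregate_capacity_ghz(2, 3.0))      # two 3GHz physical CPUs
    print(worst_case_ghz_per_vcpu(2, 3.0, 4))  # four vCPUs sharing them
```

The point of the `min` is that under-subscribing does not make a vCPU faster than the silicon underneath it; it is only over-subscription under sustained load that shrinks the effective share.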

What this all boils down to is that planning the “number of vCPUs to physical cores” is really an exercise in deep resource utilization analysis on the projected workloads. This is often data that is absent when coming from a physical infrastructure, where even heavily underutilized workloads still consume a full physical server, but it is vital to ensuring that resources are being consumed in the most efficient manner, which is the core value proposition of virtualization.
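As a rough illustration of what that analysis feeds into, here is a minimal Python sketch that checks whether a set of workloads fits in a pool of processor time. Treat everything in it as an assumption: the workload names and demand figures are made up (only the 200MHz DNS figure comes from the example above), the 20% headroom is an arbitrary placeholder, and summing average demand ignores the spikes that real capacity planning has to account for.

```python
def total_demand_mhz(demands_mhz: dict) -> int:
    """Sum the expected sustained demand of every workload, in MHz."""
    return sum(demands_mhz.values())


def fits(capacity_mhz: int, demands_mhz: dict, headroom: float = 0.2) -> bool:
    """True if the workloads fit while keeping a fraction of capacity spare.

    headroom is an arbitrary placeholder for spikes and hypervisor overhead.
    """
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in the range [0, 1)")
    usable_mhz = capacity_mhz * (1 - headroom)
    return total_demand_mhz(demands_mhz) <= usable_mhz


if __name__ == "__main__":
    # Hypothetical workloads; only the DNS figure is taken from the post.
    workloads = {"dns": 200, "file_server": 2000, "app_server": 2500}
    print(total_demand_mhz(workloads), "MHz wanted out of 6000 MHz")
    print("fits:", fits(6000, workloads))
```

Notice that the number of vCPUs does not appear anywhere in the check. It is the measured demand of the workloads that decides whether the host is oversubscribed, not the ratio itself.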

Great post – thanks Mark. I really struggle to explain this to customers, so it is really helpful for me. When designing virtualized architectures for Exchange hosting, I have to keep reminding customers that mailbox servers really do run at consistently high CPU utilization (in an environment where we are placing as many mailboxes per server as possible). They seem to think that they can put 4 mailbox VMs on a single physical server and somehow get the same performance as 4 physical servers….

Great point. I am doing some Exchange virtualization work in a multi-tenant environment now and it is challenging – especially when having to deal with E2k, 7 and 10 at once. The architecture has changed a ton over time!

It’s interesting that both ends of the bad assumption spectrum are common. I’ve seen the same as you have, and also the opposite, where a customer is reluctant to put more than a few commodity core infra servers on a Nehalem core!

You had me until you petered out in the last paragraph. It seems we need a methodology. I am working on an approach but it’s at the aggregate level and to get down to something statistically accurate there is a lot of data gathering and data entry required.

Agreed on both counts :). Tricky space…