NVMe is probably one of the best upgrades PC users can make since the original move from HDD to SSD. Sure, M.2 slots are bottlenecked by DMI, as are PCIe slots hanging off the chipset, but DMI still runs at roughly 32Gb/s, which is a lot more than SATA’s 6Gb/s, and that extra bandwidth really lets solid state technology shine. The form factor is a benefit as well: the drive slots directly into the motherboard, freeing up drive bays, needing no cabling, and allowing that much more airflow. Of course, most motherboards provide two of these magic slots and, where there are two disks, RAID 0 inevitably beckons!
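To put the DMI vs SATA numbers in perspective, here’s a quick sketch of the arithmetic. It assumes SATA III’s 6Gb/s line rate with 8b/10b encoding and treats DMI 3.0 as a PCIe 3.0 x4-equivalent link (8GT/s per lane, 128b/130b encoding). The function name is just illustrative, and protocol overhead beyond line encoding is ignored, so real-world numbers land a bit lower.

```python
# Rough usable-bandwidth arithmetic for SATA III vs DMI 3.0.
# Assumptions: SATA III = 6 Gb/s, 8b/10b encoding; DMI 3.0 ~ PCIe 3.0 x4
# (8 GT/s per lane, 128b/130b encoding). Only line-encoding overhead is
# modeled; packet/protocol overhead would lower these figures further.


def usable_gbytes_per_s(gtransfers_per_lane: float, lanes: int,
                        payload_bits: int, coded_bits: int) -> float:
    """Convert a per-lane line rate (GT/s) into payload throughput in GB/s (10^9 bytes)."""
    gbits = gtransfers_per_lane * lanes * payload_bits / coded_bits
    return gbits / 8


sata3 = usable_gbytes_per_s(6.0, 1, 8, 10)     # 0.6 GB/s
dmi3 = usable_gbytes_per_s(8.0, 4, 128, 130)   # ~3.94 GB/s

print(f"SATA III: {sata3:.2f} GB/s")
print(f"DMI 3.0:  {dmi3:.2f} GB/s")
print(f"Ratio:    {dmi3 / sata3:.1f}x")
```

Even after encoding overhead, DMI leaves roughly 6.6x the headroom of a SATA port under these assumptions.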

Right off the bat, it should be stated that we’re solidly in the territory of diminishing returns, particularly for sequential read and write performance. With single NVMe parts able to push north of 3GB/s, there isn’t much headroom left under the DMI ceiling. That said, there is some, and massive density still isn’t a thing in any solid state part, with 1TB being the realistic breakpoint for now. Managing multiple drives can be a chore, so RAID 0 also brings a tempting convenience aspect by way of volume aggregation. Plus, if you can do something insane, you probably should!
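The diminishing-returns point can be made concrete with an idealized model: RAID 0 scales sequential throughput with the number of members until the shared DMI link saturates. This is a sketch under loudly stated assumptions (roughly 3.94GB/s usable DMI 3.0 bandwidth, both M.2 slots behind DMI, and a hypothetical 3.2GB/s drive standing in for “north of 3GB/s”); real arrays land below it.

```python
# Idealized RAID 0 sequential model: throughput scales with member count
# until the shared upstream link (DMI here) saturates.
# Assumed figures: ~3.94 GB/s usable DMI 3.0, and a hypothetical 3.2 GB/s
# NVMe drive. Controller and RAID-driver overhead are ignored.

DMI3_GBPS = 3.94


def raid0_seq_ceiling(per_drive_gbps: float, drives: int,
                      link_gbps: float = DMI3_GBPS) -> float:
    """Best-case sequential throughput: n drives in parallel, capped by the link."""
    return min(per_drive_gbps * drives, link_gbps)


single = 3.2  # hypothetical per-drive sequential throughput in GB/s
for n in (1, 2):
    ceiling = raid0_seq_ceiling(single, n)
    gain = (ceiling / single - 1) * 100
    print(f"{n} drive(s): {ceiling:.2f} GB/s ({gain:+.0f}% vs one drive)")
```

Under these assumptions, a second 3.2GB/s drive can add at most about 23% sequential throughput before DMI becomes the limit.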

Previous entries on the ComplaintsHQ gaming beast covered the original NVMe loadout (a 1TB Samsung EVO 960 and a 512GB Toshiba RD400, so no RAID 0 happening there) and the later upgrade of the boot volume to an Intel Optane 900P. With prices continuing to become more reasonable, and commoditization reaching the point where these things show up on sale at Best Buy, a recent impulse purchase resulted in this thing arriving at the HQ:

This is a much more suitable RAID 0 companion for the EVO 960, so the time seemed right to see what Intel RST could do with dual NVMe!

The install was the usual routine and not worth documenting, with one noteworthy exception. The miles of plastic cladding motherboards use today, both to look cool and to fill the case with glorious LED, can be a real pain in the ass when you need to get at the M.2 slots, since one of them is often buried under the cladding. In our case, we had to pull every card, including the obnoxiously large 2080 Ti, to get under the hood.

Enabling RAID on the Z390 chipset requires turning on the SATA controller (which had been left off in the gaming beast), then enabling RAID mode for each M.2 slot, and finally configuring the array via the now-accessible “Intel RST config” under Advanced Options in UEFI setup. Asus covers this well here:

Setting up the RAID was painless, particularly since this is a data drive (Optane is still the best option for boot thanks to its insane random perf), and Windows 10 already has all of the driver support needed to handle Intel chipset RAID.

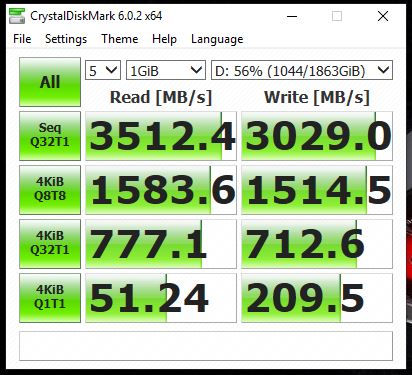

EDIT: Mystery solved! The 4K random Q32T1 drop described below was resolved by updating CrystalDiskMark 6; it seems that post-Spectre/Meltdown, an update is required. The release notes don’t make clear why, but the results speak for themselves:

Before we get to the RAID testing, let’s look back at the perf of the individual parts. One critical thing must be discussed first, and it is a bit of an ongoing mystery at the HQ: a precipitous loss of 4K random Q32T1 performance. The shots below are from benchmark runs back in June, and at some point since then, something changed. Unfortunately we do not have updated screen caps, but take our word for it: 4K Q32T1 random perf (and only this perf tier) dropped roughly in half for all of these parts (Optane, Samsung NVMe, Toshiba NVMe), to around 380MB/s read and write. It’s not clear why this happened or what is causing it. One suspect is the Spectre/Meltdown fix, which does disproportionately hammer faster storage parts, but the timing doesn’t quite line up, and while some initial testing showed a similar horror show (Guru3D in particular), subsequent BIOS releases supposedly cleared it up. The gaming beast’s Maximus X Code is on the latest UEFI bits, and there doesn’t seem to be a rage cacophony on the forums about small random perf on Asus boards, so we’re skeptical. We’ll continue to monitor the situation, and possibly at some point start over with a clean install of Windows 10, but for now, when comparing new benchmarks to the old, read the 4K Q32T1 numbers in the older shots as roughly 2x what these parts manage today.
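For context on what that drop means, throughput at a fixed transfer size converts directly to IOPS, and Little’s law turns IOPS at a given queue depth into average latency. A minimal sketch, assuming “4K” means 4KiB transfers and MB/s means 10^6 bytes per second (check the benchmark’s unit settings). The function names are hypothetical, and the “before” figure simply applies the roughly 2x factor described above rather than any measured number.

```python
# Converting 4K benchmark throughput into IOPS and average latency.
# Assumptions: "4K" = 4 KiB (4096-byte) transfers, MB/s = 10^6 bytes/s.
# The "before" value is an estimate (2x the post-drop figure), not a measurement.

BLOCK_BYTES = 4096


def mbps_to_iops(mb_per_s: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Throughput in MB/s -> I/O operations per second at a fixed block size."""
    return mb_per_s * 1_000_000 / block_bytes


def little_latency_us(iops: float, queue_depth: int) -> float:
    """Little's law: average latency = outstanding I/Os / completion rate (in microseconds)."""
    return queue_depth / iops * 1_000_000


now = mbps_to_iops(380)   # post-drop Q32T1 figure
before = now * 2          # rough pre-drop estimate
print(f"now:    {now:,.0f} IOPS, ~{little_latency_us(now, 32):.0f} us at QD32")
print(f"before: {before:,.0f} IOPS, ~{little_latency_us(before, 32):.0f} us at QD32")
```

By this arithmetic, 380MB/s at 4KiB works out to roughly 93K IOPS, or about 345µs average latency with 32 requests in flight.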

OK, with all of that controversy out of the way, let’s look at individual disk performance and then at the RAID. First, the Optane, just as a reminder of its crazy 4K random perf:

Next up is the Samsung EVO 960:

And last but not least is the Toshiba RD400:

So how did the RAID 0 fare? Not huge gains, but overall solid and pushing right up against the DMI bottleneck:

- Seq Q32T1: R +10%, W +60%

- 4K Random Q32T1: R neutral, W +7%

- Seq Q1T1: R +50%, W +50%

- 4K Random Q1T1: roughly a wash vs single disk perf

Overall, it’s no shock that RAID 0 really shines in sequential, particularly with a 1GB dataset that gets nicely chunked across the two parts. So is it worth it? Well, the convenience is certainly great. And contrary to common fears, SSD durability has become excellent, exceeding HDD for any realistic consumer use case. Sequential perf is deep into diminishing returns, although the gains are clearly there. If the choice is between managing two separate drives and having one volume with the storage density you’re after, RAID 0 NVMe on modern chipsets is a good way to go. Two 1TB parts won’t really save any money over a single 2TB part, but individual NVMe parts max out at 2TB, so for NVMe-based bulk storage beyond that, RAID starts to become very compelling.
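To illustrate why a 1GB sequential test chunks so nicely across two parts while 4K Q1T1 stays a wash, here’s a minimal RAID 0 address-mapping sketch. It assumes plain round-robin striping with a hypothetical 128KiB stripe size; Intel RST lets you choose the stripe size when creating the volume, and this is not RST’s implementation. The capacity helper reflects the general rule that a striped volume uses each member only up to the size of the smallest one.

```python
# Minimal RAID 0 address mapping (round-robin striping).
# Assumption: hypothetical 128 KiB stripe size; not Intel RST's actual code.
from __future__ import annotations

STRIPE = 128 * 1024


def locate(offset: int, disks: int, stripe: int = STRIPE) -> tuple[int, int]:
    """Map a logical byte offset to (member disk index, byte offset on that disk)."""
    stripe_no, within = divmod(offset, stripe)
    disk = stripe_no % disks
    disk_offset = (stripe_no // disks) * stripe + within
    return disk, disk_offset


def bytes_per_disk(offset: int, length: int, disks: int,
                   stripe: int = STRIPE) -> list[int]:
    """Count how many bytes of [offset, offset + length) land on each member."""
    counts = [0] * disks
    pos, end = offset, offset + length
    while pos < end:
        disk, _ = locate(pos, disks, stripe)
        # Consume up to the end of the current stripe or the request, whichever is first.
        chunk = min(stripe - pos % stripe, end - pos)
        counts[disk] += chunk
        pos += chunk
    return counts


def raid0_capacity(member_sizes: list[int]) -> int:
    """Striped volume size: every member contributes as much as the smallest one."""
    return len(member_sizes) * min(member_sizes)


print(bytes_per_disk(0, 1 << 30, 2))                 # → [536870912, 536870912]
print(bytes_per_disk(5 * STRIPE + 8192, 4096, 2))    # → [0, 4096]
```

A 1GiB sequential read splits evenly between the two members, while a single 4KiB read lands entirely on one disk, which is consistent with QD1 small random reads seeing no benefit from the second drive. By the same capacity rule, two of the 2TB parts mentioned above would yield a volume of roughly 4TB.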