Before wrapping up the vCloud Director installation and configuration, I wanted to take a break and document one of the related, critically important core components of an overall software-defined datacenter strategy: workflow orchestration. The ultimate goal of describing infrastructure in software is to gain agility and efficiency through orchestration. If networks, servers and even storage volumes are all code, then they should be easy to set up, tear down and move on demand. This leads to genuine economies of scale once applications adopt cloud design patterns and are built for elasticity and failure. On-premises infrastructure today, even virtual infrastructure, is largely static. Mobility is generally limited to high availability scenarios and to shifting workloads around within a narrowly defined footprint to facilitate maintenance windows, migrations, and a limited amount of dynamic capacity efficiency (think vMotion, Storage vMotion, DRS, DPM, etc.). There are also BCDR scenarios that tools like SRM have made somewhat easier, but these are still complex to build out and not truly dynamic.

Ultimately, next generation IT Service Management (ITSM) architectures should be built around a core of true automation, on top of a completely abstracted foundation: a separation between the physical and the logical within each resource domain pillar. That means Software Defined Networks that separate the data plane from the control plane and allow IP addresses to move freely between physical sites without disrupting applications; virtualized storage volumes that present a common face to the hypervisor despite being distributed across a wide geographic area; and capabilities within the hypervisor to dynamically create, destroy and move workloads across this abstracted foundation. All of this should have centralized configuration and consumption via self-service portals hooked directly into a service catalogue and some sort of configuration tracking system (a CMDB, central CMS, etc.). An evolution in the maturity of core architecture along these lines would have a cascade effect on support systems like traditional billing, provisioning, NMS and trouble ticketing, making them more efficient and allowing much more accurate insight into the current state and health of the infrastructure; a boon for security and GRC (governance, risk and compliance).

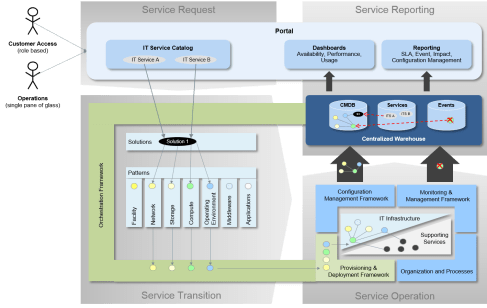

There is a lot of great thought going into this topic across the industry, and I have found some resources on the web particularly useful. I most enjoy the agnostic viewpoints, decoupled from specific implementation detail (because honestly this space is still anyone’s game). One blog I found fantastic was Felix Bodmer’s. Felix writes extensively on this topic and has some excellent insights and vision. He did what I think is a perfect job of diagramming an example of a next generation ITSM so, rather than reinvent the wheel, I will share his work (with due credit, of course!):

Felix’s excellent diagram depicts all of the components discussed above:

- Portal infrastructure facing both administrators (core IT, tangential support staff) and consumers (developers, business analysts, etc.). This portal presents a central service catalogue, filtered dynamically based on entitlement, and system health dashboards, likewise tailored dynamically to whoever is asking

- A well-rationalized, centralized configuration management construct containing a comprehensive configuration management and event consolidation repository for the entire service delivery platform

- An abstracted, virtualized collection of foundation resource domains (compute, network and storage), with allocation and consumption controlled by an orchestration layer that ties directly into operational systems

With a next generation infrastructure like the one described above in place, traditionally difficult tasks like compliance auditing, runbook automation, and monetization (via chargeback or showback) become significantly easier. Capital asset utilization improves dramatically as well, and the entire system can be made even more economically efficient through hybrid models spanning out to public cloud services. The more mature an organization is in terms of service automation, the better able it will be to maximize the value of public cloud utilities.

So what is VMware up to in this important space? Quite a lot, as it turns out. A series of products, some legacy products that have been iterated on and some new ones, defines its forward strategy in ITSM:

- vCD – vCloud Director, the reason for this entire series, is the multi-tenancy, “private cloud”, abstraction component of the VMware portfolio. As discussed, the idea of vCD is to provide a more flexible construct for the allocation and consumption of virtualized resources

- vCO – vCenter Orchestrator, the focus of this particular entry, is the workflow automation engine that has been around for a while now. The purpose of vCO is to automate common functions within vCenter through the creation of workflows that can then be catalogued and repeated. In this sense it is a workflow orchestrator, but one very much tied to a vSphere-centric view of things. Still, given that within a virtual datacenter the vSphere view of assets could very well encompass the entire estate, this isn’t necessarily a shortcoming. In any event, it is a core piece of plumbing that is important to understand

- vCAC – vCloud Automation Center is a piece that I won’t be covering as part of this series, but it is extremely important nonetheless. vCAC is the up-the-stack orchestration component, providing service entry point portal capability as well as automation of vCloud tasks. For anyone familiar with Cisco’s entries in this space (the Cisco Intelligent Automation for Cloud framework), vCO would map roughly to the capabilities provided by their Tidal acquisition, while vCAC would be more analogous to their Newscale acquisition. The interesting question is whether there is a synergy story between vCO and vCAC and, if there is, what that integration looks like

- vCOPs – vCenter Operations Manager is the heart of the operational component of the service delivery platform. A well-rationalized operational platform is a critical component of an ITSM architecture, and integrating legacy systems is often quite painful. Choosing between consolidation into existing legacy systems and introduction of a new operational platform is a tough decision, but in my opinion it is smarter to bet on the latter, on the assumption that the former will ultimately be marginalized as assets shift away from legacy systems and onto the hybrid cloud platform. In addition, the integration story between a cloud-centric operational platform like vCOPs (with an API-centric devops focus) and public cloud platforms like AWS CloudWatch or ServiceNow SaaS monitoring is likely to be smoother

With the above quick-and-dirty primer on ITSM in mind, let’s look at the setup and configuration of vCenter Orchestrator. vCO installs as part of vCenter and should be available on the Start Menu under VMware:

The first step is to run through the vCenter Orchestrator Configuration utility to make sure the service components are set up. This utility is actually a web portal running on port 8282 on the local server. I found that Internet Explorer 10 on Windows Server 2008 R2 required compatibility mode to render the vCenter Orchestrator Configuration utility correctly. This matters because if the page doesn’t render correctly, it will be very difficult to configure. If the view matches the screenshots below, everything is fine.

Be aware that the screenshots depict a working end state. In a new install, the green light indicators next to each functional area would be red triangles; as each service component is successfully configured, its red triangle is replaced with a green circle. When logging in the first time, there will be a prompt to change the password from the default (login: vmware, password: vmware) before continuing. With the password changed, we can walk through the configuration items one step at a time. The “General” tab (I’ll call them tabs for simplicity even though they aren’t) shows the current system install path, version and status:

The first bit to configure is the networking details. Here we confirm the IP address, hostname and TCP port configuration for our vCO server instance. On a single-box configuration this will be the vCenter server info, but if vCO runs on a separate server, enter that server’s details instead:

The other important piece of the network configuration section is the “SSL Certificate” tab (this one is a real tab). When using self-signed or private certificates without a configured enterprise root of trust, you end up with continual certificate warnings, and for systems that connect to each other silently (with no user there to click through a warning), this can break interoperability. To get around this, utilities often include a step where certificates are imported or “pre-validated”, so that the administrator can acknowledge and accept the questionable authority once and the system can ignore it from that point forward. Here we are importing a certificate that the vCO server will trust for its secure communication. I went ahead and imported the default VMware certificate from the vCenter server:
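If you want to confirm out of band that the certificate you are accepting really is the one the vCenter server presents, comparing fingerprints is a common approach. The sketch below is my own illustration, not part of vCO: it uses Python’s standard `ssl` module to fetch whatever certificate a host presents (without validating the chain, which is exactly the self-signed situation described above) and formats its SHA-1 fingerprint. The function names are hypothetical, and whether your version of the configuration utility displays a SHA-1 fingerprint or some other digest is an assumption to check.

```python
import hashlib
import ssl


def sha1_fingerprint(pem_cert: str) -> str:
    """Return the colon-separated, upper-case SHA-1 fingerprint of a PEM certificate."""
    # Strip the PEM armor and base64-decode to the DER bytes the fingerprint is taken over
    der = ssl.PEM_cert_to_DER_cert(pem_cert)
    digest = hashlib.sha1(der).hexdigest().upper()
    # Group into byte pairs ("AB:CD:..."), the way certificate viewers usually show it
    return ":".join(digest[i:i + 2] for i in range(0, len(digest), 2))


def fetch_fingerprint(host: str, port: int = 443) -> str:
    """Fetch the certificate a server presents and fingerprint it.

    With no CA bundle supplied, get_server_certificate does not validate the
    chain, so a self-signed certificate is returned rather than rejected.
    """
    pem = ssl.get_server_certificate((host, port))
    return sha1_fingerprint(pem)
```

For example, `fetch_fingerprint("vcenter.example.local")` (a hypothetical host name) returns a string of 20 colon-separated byte pairs that can be compared with the thumbprint your browser reports for the same server before accepting the certificate.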

The next step is to configure the LDAP connection for authentication services. I have vCenter pointed at Active Directory, so in this case we enter the Active Directory info here. After setting the “LDAP client” drop-down to “Active Directory”, I set the primary and secondary LDAP hosts to two of my domain controllers. The port will be the global catalogue port (3268) if “Use Global Catalogue” is selected, or the standard LDAP port (389) if not. The LDAP root should be set to the fully qualified distinguished name of the forest root (DC=XXXX, DC=YYYY):
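The port and forest root rules above are mechanical enough to express in a few lines. This is a minimal sketch, assuming you know the forest root’s DNS name; the `corp.example.com` domain and the function names are hypothetical, and the code only derives the values to type into the utility rather than talking to a directory.

```python
GLOBAL_CATALOG_PORT = 3268  # Active Directory global catalogue (plain LDAP)
LDAP_PORT = 389             # standard LDAP


def ldap_port(use_global_catalog: bool) -> int:
    """Port that pairs with the 'Use Global Catalogue' checkbox."""
    return GLOBAL_CATALOG_PORT if use_global_catalog else LDAP_PORT


def forest_root_dn(dns_domain: str) -> str:
    """Turn a DNS name like 'corp.example.com' into 'DC=corp,DC=example,DC=com'."""
    # Ignore a trailing dot and any empty labels it would produce
    labels = [label for label in dns_domain.strip(".").split(".") if label]
    if not labels:
        raise ValueError("empty domain name")
    return ",".join(f"DC={label}" for label in labels)
```

So `forest_root_dn("corp.example.com")` gives `DC=corp,DC=example,DC=com`, and `ldap_port(True)` gives 3268.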

In addition, credentials must be entered to establish the connection (an account with browse access to the referenced domain), and vCO also needs the default container for both users and groups. The last piece of information required is the path to the AD group that will be granted vCO admin rights. An existing group can be used (granting vCO admin to everyone in it), or a new group can be created (recommended). The remaining options, set via checkboxes, govern LDAP search behavior:

- Dereference Links – all links are followed before a search is performed

- Filter Attributes – applies attribute filters to search results

- Ignore Referrals – disables referral handling

Finally, the host reachable timeout can be set. The default is 20 seconds. This setting determines how long the connection test waits before declaring the host unavailable.
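A timeout-bounded TCP connection attempt is the simplest way to reproduce a reachability check like this by hand, for example against a domain controller’s global catalogue port. I don’t know exactly how the utility implements its own test, so treat this Python sketch as an approximation: `host_reachable` is a hypothetical helper, and a successful TCP connect says nothing about whether the LDAP bind credentials are valid.

```python
import socket


def host_reachable(host: str, port: int, timeout: float = 20.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds.

    The 20-second default mirrors the configuration utility's default
    host reachable timeout.
    """
    try:
        # create_connection resolves the name and tries each address in turn
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, name resolution failures and timeouts
        return False
```

For example, `host_reachable("dc01.corp.example.com", 3268)` (a hypothetical domain controller) tells you whether the global catalogue port answers at all, which helps separate network problems from credential or path typos when the LDAP test fails.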

If all LDAP info has been entered correctly, the configuration test will pass and the status will flip to a green light. If there are errors, the bottom panel will indicate what they are (likely culprits are credential issues or typos in paths).

With LDAP configured, the next step is to set up the database connection. The first step is to choose the “Database Type”. Options available here are Oracle, SQL Server, and PostgreSQL. After the database type has been selected, credential information for the connection must be provided. A remote or local database instance can be used. In my case, I chose to use the existing SQL Express instance already being used for vCenter. First I downloaded SQL Server 2008 R2 Management Studio Express, then connected to the instance and added a database:

This is basic SQL Server admin stuff. Connect with the credentials for the local server/instance and then, under Databases, simply create a new blank database. In this case I followed the naming convention of the other built-in VMware databases: VIM_XXX. We can now configure vCO to use this new server/instance:database:

Enter the same credentials, server and instance info used in the step above, along with the default SQL Server port (1433) unless SQL Server has been configured to run on a different port, in which case use that custom port. After applying changes, the green light will appear if everything has been entered correctly. Again, common problems are the connection string (misnaming the server or instance) and credential issues (insufficient permissions, incorrect password, etc.).
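Each database type in the drop-down has its own usual default port. The mapping below is a small sketch using each vendor’s standard default listener port (1433 for SQL Server, 1521 for Oracle, 5432 for PostgreSQL); these are product defaults, not values read from vCO, and the `db_port` helper is hypothetical.

```python
from typing import Optional

# Vendor default listener ports. These are the products' usual defaults,
# not values read from vCO; a reconfigured server may listen elsewhere.
DEFAULT_DB_PORTS = {
    "SQL Server": 1433,
    "Oracle": 1521,
    "PostgreSQL": 5432,
}


def db_port(db_type: str, custom_port: Optional[int] = None) -> int:
    """Return the port to enter for the chosen database type.

    An explicitly configured custom port wins; otherwise fall back to the
    vendor default for that database type.
    """
    if custom_port is not None:
        return custom_port
    if db_type not in DEFAULT_DB_PORTS:
        raise ValueError(f"unsupported database type: {db_type!r}")
    return DEFAULT_DB_PORTS[db_type]
```

One caveat worth checking: a SQL Server Express named instance is often configured with dynamic ports rather than 1433. In that case SQL Server Configuration Manager (in the instance’s TCP/IP properties) shows the port it is actually listening on, and that value is what `custom_port` is for.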

Next up, we configure the vCO “Server Certificate”. In my case I opted to create a local certificate database and generate a self-signed server certificate. Enterprise or public PKI implementations would take the opportunity here to import an existing certificate database and certificate signing request:

Moving along, we are now up to the “Licenses” configuration. This one caused me some trouble for some reason. There are two options here:

- Import the licenses from vCenter via URL

- Manually add the licenses by entering the license key (required pre 4.0)

For whatever reason, the first option to import licenses via URL did not work. No matter what I tried, I could not get around this dreaded error:

Error connecting to: https://server:443/sdk.

Error No licenses No licenses entered.

The options here are pretty straightforward: you enter a server name, credentials, TCP port and path. I tried everything for the server name: localhost, IP address, fully qualified domain name and short name. For credentials I tried everything as well: administrator@domain, domain\administrator, admin@system-domain. I left the TCP port as SSL (443) and the path set to the default (/sdk). I verified that the /sdk path was working by browsing to the WSDL at https://win-8s8ff19a7rj/sdk/vimService.wsdl, and verified that the license was valid by double-checking it in the vSphere Client and by connecting to vCenter via PowerCLI. Hitting a wall at every turn, I fell back to manual entry. I simply entered the vCenter license key under “Serial Number” and the server name under “License Owner” and, as if by magic, that worked:
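The WSDL check I did in the browser can also be scripted, which makes it easier to try several server names in a row. This is a minimal sketch using only the Python standard library. The helper names are hypothetical, certificate verification is deliberately switched off to tolerate a self-signed vCenter certificate (acceptable in a lab, not elsewhere), and looking for the word `definitions` (the WSDL root element) is only a crude sanity check that something WSDL-like came back.

```python
import ssl
import urllib.request


def sdk_wsdl_url(server: str, port: int = 443, path: str = "/sdk") -> str:
    """Build the WSDL URL under the SDK path, e.g. https://server:443/sdk/vimService.wsdl."""
    return f"https://{server}:{port}{path.rstrip('/')}/vimService.wsdl"


def wsdl_reachable(url: str, timeout: float = 10.0) -> bool:
    """Fetch the WSDL without certificate verification and sanity-check the body."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # must be disabled before CERT_NONE is allowed
    ctx.verify_mode = ssl.CERT_NONE  # accept self-signed certificates (lab only)
    try:
        with urllib.request.urlopen(url, context=ctx, timeout=timeout) as resp:
            # WSDL 1.1 documents have a <definitions> root element
            return resp.status == 200 and b"definitions" in resp.read(65536)
    except OSError:
        # URLError/HTTPError, refused connections and timeouts are all OSError subclasses
        return False
```

For example, looping `wsdl_reachable(sdk_wsdl_url(name))` over `localhost`, the IP address, the FQDN and the short name shows which forms resolve and answer. A successful fetch only proves the SDK endpoint is reachable, not that the license import will succeed, which matches the experience above.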

With the license issue finally resolved and flipped to green, I was able to move forward to “Startup Options” and start the service. I went ahead and configured vCO to install itself as a service and start with the system:

As a final step we can configure the vCO plugins and their installation status, as well as add any additional plugins via upload:

With all systems showing a green light, we now have our orchestration framework in place! The next step would be to launch the vCenter Orchestrator Client, create some workflows (or use the canned ones), and test some automation magic. Hope you enjoyed this Orchestrator intermission! The next entry will pick up where we left off with the vCD setup.