Lots of VMware activity at Complaints HQ lately, as these pages have hinted. Coming off the whirlwind of chaos that was VMworld 2013, I’ve been hitting the home lab hard and getting it into shape so I can test all of the new bits coming down the pike (as we all know, this is a big release year!). In the last entry we talked about the Datacenter Extension (DCE) capability provided in vCloud Connector by selecting “stretch deploy” on a properly prepared and qualified vApp. Just as a refresher, these are the prerequisites:

vSphere

- A working vCenter implementation with vDS (virtual distributed switch is a must have)

- vShield Edge deployed in vCenter with one internal interface connected to the VM port group and one uplink interface connected to a port group that can get external egress

- vCloud Connector Server deployed

- vCloud Connector Node deployed and configured to point to the vCenter, then registered with the vCC Server and stretch deploy settings configured

- A powered off VM with a single network interface connected to the port group the VSE is on

vCloud Director

- A working vCD and all of the prereqs that implies (provider vDC, organization, org vDC)

- A routed org network

- A vApp with its VM connected to a routed vApp network (this part is key)

- vApp powered on, VM powered off (also key)

- vCloud Connector Server deployed

- vCloud Connector Node deployed and configured to point to the vCloud, then registered with the vCC Server and stretch deploy settings configured

vCloud Hybrid Service

- A vCHS account and either a virtual private cloud or a dedicated cloud

- An edge gateway deployed and configured with a public IP address

- Admin privileges on the account being used

- A vCloud Connector Server deployed

- On prem, the vCHS public vCC node registered with the vCloud Connector Server and stretch deploy settings configured

- To register the public node you use the URL of your organization on https port 8443. It will look something like this:

https://pXXvYY-vcd.vchs.vmware.com:8443

- In addition to the URL, you will need the name of your org (the actual name and make sure to match case) and your credentials (remember you need infrastructure and network admin roles)

- To set up stretch deploy you will reuse the same vCHS credentials

One important thing to note for all of these is that before you go to the vCC server and register for stretch deploy, you must visit vCenter by way of the vSphere client and set up the vCloud Connector plugin environment. So the order of execution is this:

- Set up core infrastructure

- Register all nodes

- Connect to vCenter using vSphere client (not web client, must be legacy)

- Verify that vCloud Connector plugin is enabled

- Visit Home->Add-ons->vCloud Connector

- From “Clouds”, right-click and add your environments. You will be adding both the vCenter here and the vCHS or vCD as well. Each configured node that has been registered with the vCC server will be prepopulated in the list if your config is good, so this is a very easy step. You just need to re-enter your credentials one more time for validation

- Once the clouds have been added and their data loaded, you return to the vCC Server

- At this point you can enter the stretch deploy settings

- Returning to the vCC plugin, any qualifying vApp/VM will now have its “stretch deploy” option available

Now ideally at this point you can click “stretch deploy”, fill out some basic info, and be off to the races copying an existing VM up to a cloud (vCD or vCHS) with its IP info intact and an SSL VPN magically created in TAP mode extending the layer 2 domain of either your vSphere vDS port group or the vApp network. The basic info required and the steps DCE performs are:

- Target cloud – where you are sending the vApp/VM

- vApp name – DCE will create a new vApp construct in the destination to hold your vApp/VM. This is the first step of the infrastructure preparation phase (which is phase 1).

- Catalog name – DCE requires deployment via the catalog facility – so the vApp/VM will be templatized into the catalog and then deployed from there. This is just “one of those things”(tm)

- Organization name – the name of the org in the target cloud to which we are deploying

- Organization network – the network the vApp will be attached to (remember, a new vApp is being created with a vApp network which will be routed to this org network entry). The org network list will be populated with any org networks that have been associated with an available gateway

- Public IP – you can select a public IP from the suballocation range available on the gateway, or specify one. The public IP will be used for the creation of NAT rules (both source and destination) if the VM requires external connectivity directly from the cloud network. The alternative would be tromboning back through the tunnel to the on-prem edge router for egress
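Since a failed stretch deploy is slow to diagnose, it can help to sanity-check these inputs before clicking through the wizard. The following is only a minimal sketch: `StretchDeployInputs` and `problems` are hypothetical names made up for illustration and are not part of any vCloud Connector API. It simply checks that no field is blank and that the public IP parses as an IP address.

```python
from dataclasses import dataclass, fields
from typing import List
import ipaddress


@dataclass
class StretchDeployInputs:
    """Hypothetical container for the wizard fields; not a vCC API type."""
    target_cloud: str
    vapp_name: str
    catalog_name: str
    org_name: str
    org_network: str
    public_ip: str


def problems(inputs: StretchDeployInputs) -> List[str]:
    """Return human-readable problems; an empty list means the inputs look sane."""
    # Flag every field that is empty or whitespace-only, in declaration order.
    found = [f"{f.name} is empty" for f in fields(inputs)
             if not getattr(inputs, f.name).strip()]
    # Only try to parse the public IP if something was actually entered.
    if inputs.public_ip.strip():
        try:
            ipaddress.ip_address(inputs.public_ip.strip())
        except ValueError:
            found.append("public_ip is not a valid IP address")
    return found
```

This does not check that the IP is actually in the gateway's suballocation range or that the org network exists; those still have to be verified in the target cloud.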

The above info is all that is required to get the workload migrated. After the base constructs are created (vApp, vApp network, firewall rules), an SSL VPN will be set up between the cloud service’s vShield Edge endpoint and the on-prem vShield Edge endpoint connected either to the vSphere port group or the vCD org.

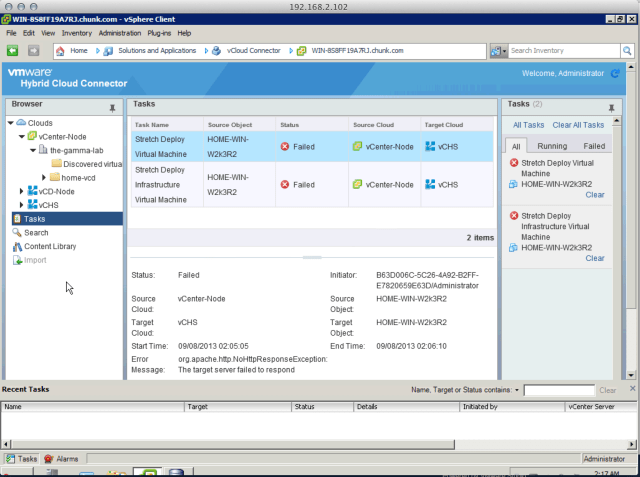

Of course this is all very quick and smooth (though be sure to account for upstream bandwidth when estimating just how quick the migration of a multi-gigabyte file over the public internet will be!). It’s all quick and smooth until… it isn’t! It looks like something in my environment is not healthy. Behold Murphy’s Law:

As you can see, things didn’t go so well for me! Not a surprise since the lab is in a constant state of flux. The good news is that this will give me a great opportunity to dive deep into the mechanisms in play here and share what was wrong and what it took to resolve it. Hopefully this will be useful to anyone who may find themselves with a similar misconfiguration in their own environments once they are ready to take the DCE path!

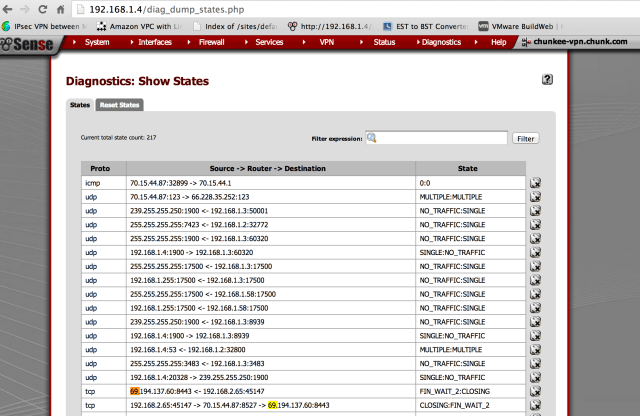

The above generic-looking HTTP timeout error occurs whether I attempt a stretch deploy from vCD or vSphere. In addition, pfSense (my edge firewall) shows nothing unusual in the log, just standard TCP connectivity between the vCC Server and (in this case) vCHS:

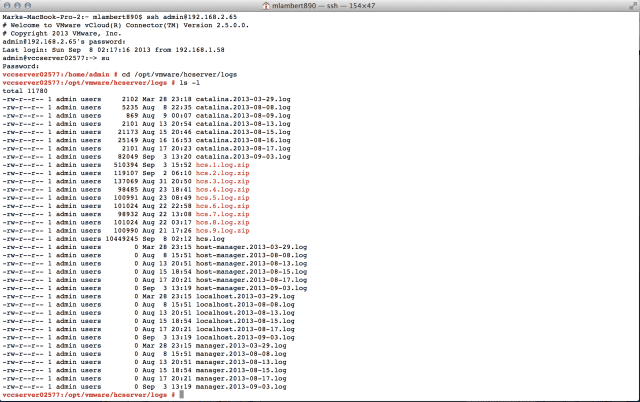

After playing around a bit and realizing you can only get so far in the GUI in terms of peeking under the covers, I decided to just dive into the deep end and see what the heck is going on from the vCC Server point of view. Luckily, these appliances can be enabled for remote access and are just an SSH away:

Some explanation is required here. You can SSH into the vCC server using the admin creds which you set. You can elevate to superuser by using the default root password which is “vmware” (which you can also change… hint hint). Once you’re in, the key path we’re looking for is:

/opt/vmware/hcserver/logs

As you can see, this is quite a busy path with lots of juicy logs. The biggest, most recent one is usually a safe bet, so let’s have a look at hcs.log:
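If you would rather not eyeball the directory listing, the "most recent one" rule of thumb can be sketched in a few lines of Python. This assumes a Python 3 interpreter is available wherever the logs are being read (for example, after copying them off the appliance); `recent_logs` is just an illustrative helper name, and the default directory is the path given above.

```python
import os
from typing import List, Tuple

LOG_DIR = "/opt/vmware/hcserver/logs"  # log path mentioned in the post


def recent_logs(log_dir: str = LOG_DIR, limit: int = 5) -> List[Tuple[str, int]]:
    """Return (name, size_in_bytes) for regular files, newest modification first."""
    entries = []
    with os.scandir(log_dir) as it:
        for entry in it:
            if entry.is_file():
                info = entry.stat()
                entries.append((info.st_mtime, entry.name, info.st_size))
    # Sort by modification time, newest first, then keep the top `limit` files.
    entries.sort(reverse=True)
    return [(name, size) for _, name, size in entries[:limit]]
```

Calling `recent_logs()` with no arguments lists the five most recently written files along with their sizes, which makes it easy to spot which log was active during the failed task.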

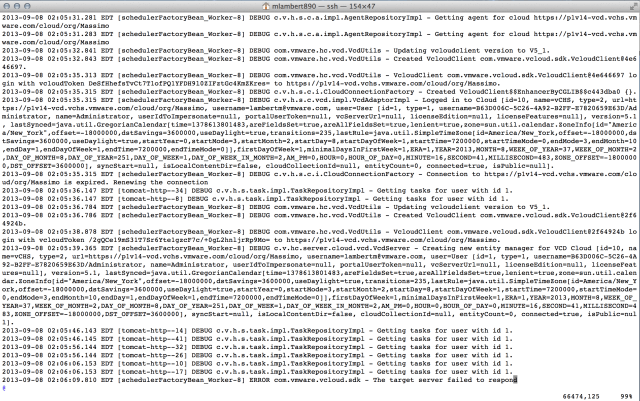

As busy logs go, this is a busy one! If you take a deep breath, and take a careful look, though, you can see that things are going quite wrong. If you remember from the first screen cap, there were two tasks that failed. The first was Stretch Deploy Virtual Machine (this one is the overall task) and the second was Stretch Deploy Infrastructure (this is the phase 1 preparation piece where the vApp is being created, etc). The overall task made it to 2%, the second task made it to 10%. If you look above, you can see the following:

DEBUG c.v.h.s.task.impl.TaskRepository.Impl – Getting tasks for user with id 1.

This entry shows up in two sections. In the upper block starting at 02:05:36.147 we see two “Getting tasks” entries before the agent moves forward to “Updating vCloud Client Version to 5_1”. I believe that this represents the instantiation of the overall task (the one that makes it to 2%).

Looking down a bit, after a big REST API response, we see six “Getting tasks” attempts before the agent times out and the tasks error out. The failure of the second task errors out the first, and the house of cards collapses.
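Counting those polling entries by hand gets old fast in a log this busy. Here is a rough sketch of how the same tally could be scripted. It assumes the “Getting tasks” lines within each block are consecutive, which the real log may not guarantee, and `polling_runs` is an illustrative name of my own, not anything shipped with vCC.

```python
from itertools import groupby
from typing import Iterable, List

MARKER = "Getting tasks for user"  # substring of the log entry quoted above


def polling_runs(lines: Iterable[str], marker: str = MARKER) -> List[int]:
    """Return the length of each unbroken run of lines that contain `marker`."""
    # groupby splits the stream into alternating runs of matching and
    # non-matching lines; only the matching runs are counted.
    return [sum(1 for _ in group)
            for is_poll, group in groupby(lines, key=lambda line: marker in line)
            if is_poll]
```

Passing an open file handle for hcs.log as `lines` yields one number per block of polling entries, so a long run right before the failure stands out immediately.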

Now in the interest of full disclosure, I have no idea what the heck is going on here. Luckily, I do have some good connections and have reached out for additional insight. I will get this solved and will keep this entry alive until we have documented what went wrong and how to fix it! In the meantime, I did the basics and verified that the vCHS cloud endpoint is in fact reachable from my vCC Server.
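For anyone who wants to repeat that basic reachability check from a script, here is a minimal sketch using only the Python standard library. The helper names `endpoint_from_url` and `can_connect` are my own, and the URL you pass in would be your own organization URL on port 8443 (the `pXXvYY` form shown earlier is a placeholder). Keep in mind that a successful TCP connect only proves the port is reachable; it says nothing about whether the API behind it is healthy.

```python
import socket
from typing import Tuple
from urllib.parse import urlsplit


def endpoint_from_url(url: str) -> Tuple[str, int]:
    """Extract (host, port) from a URL, defaulting to 443 for https and 80 otherwise."""
    parts = urlsplit(url)
    if not parts.hostname:
        raise ValueError(f"no host in URL: {url!r}")
    default = 443 if parts.scheme == "https" else 80
    return parts.hostname, parts.port or default


def can_connect(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False
```

A typical use would be `can_connect(*endpoint_from_url(your_org_url))`, run from the vCC Server itself or from a machine on the same network segment.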

Signing off for now, but watch this space for the solution to this mystery! And of course if anyone is experiencing this as well, or has a suggestion, let me know!