Background

For a brief moment we all lived in a Golden Age of transcoding. CPUs with power to spare, mature open source libraries supporting GPUs with even more power to spare, native libraries optimized for those GPUs gaining adoption, and integrated GPUs (IGPs), long considered useless for pretty much anything, suddenly enjoying a Renaissance thanks to Intel Quick Sync. Hell, even mobile chipsets have matured enough to get the job done. Of course, the caveat is that this all assumes 1080p and H.264, aka “HD video”. Which was great… while it lasted. Just as we were lulled into a false sense of security, along comes 4k and H.265 to ruin everything!

At 3840×2160, 4k has exactly 4x the pixel count of 1080p's 1920×1080, so it is at best 4x the workload. Unfortunately, it gets even worse. Pixel counts aren't the full picture (pun intended); a pixel isn't much good without color data. Most 1080p content is 8 bit, meaning 1 byte of color data each for red, green and blue, or 24 bits (3 bytes) total. For 1080p, that means a single raw frame is 1920×1080×3 = 6,220,800 bytes, or roughly 6.2MB. 4k, on the other hand, is typically 10 bit color, which means 30 bits (4 bytes, rounding up) total. That means 3840×2160×4 = 33,177,600 bytes, or roughly 33.2MB. Yeah, that's a really bad 5.3x increase! Suddenly the hardware isn't looking so shiny any more!
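The frame-size arithmetic above can be checked with a few lines of Python. This is a sketch that follows the simplified model used here (three full-resolution color channels per pixel, with the per-pixel bit total rounded up to whole bytes); decoders often store frames differently, for example with subsampled chroma, so treat the numbers as illustrative rather than exact memory footprints.

```python
import math


def raw_frame_bytes(width: int, height: int, bits_per_channel: int, channels: int = 3) -> int:
    """Size of one uncompressed frame under the simplified model:
    `channels` color values per pixel, per-pixel bits rounded up to whole bytes."""
    bytes_per_pixel = math.ceil(bits_per_channel * channels / 8)
    return width * height * bytes_per_pixel


hd = raw_frame_bytes(1920, 1080, 8)    # 8-bit 1080p: 24 bits -> 3 bytes per pixel
uhd = raw_frame_bytes(3840, 2160, 10)  # 10-bit 4k: 30 bits -> rounded up to 4 bytes per pixel

print(f"1080p: {hd:,} bytes (~{hd / 1e6:.1f} MB)")
print(f"4k:    {uhd:,} bytes (~{uhd / 1e6:.1f} MB)")
print(f"ratio: {uhd / hd:.2f}x")
```

The rounding step is what turns a 4x pixel increase into a larger data increase: 30 bits per pixel does not fit in 3 bytes, so each 4k pixel costs a full 4 bytes in this model.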

Test Setup

With 4k content becoming slightly more common, the time seemed right to quantify just how bad things had gotten. For the test, a Blu-ray rip of Prometheus was chosen. Here are the gory details on the source file:

Blu-ray rip, 3840×1600 source resolution (an unusual 2.4:1 aspect ratio), 10 bit YUV color model, encoded at 16Mb/s using H.265 (HEVC) into an MKV container. The audio includes tracks in multiple languages, and the total file size is a whopping 18.8GB.

The goal of the project was to reduce the size of this beast to under 4GB by applying the following transformations:

- Non-English audio tracks stripped

- Non-English subtitles stripped

- Video bit rate reduced to 12Mb/s
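The post doesn't say which tool performed these transformations, so the following is only a sketch of how they could be expressed with ffmpeg, an assumption on our part. The helper `build_ffmpeg_args` and the file names are hypothetical; the ffmpeg options used (`-hwaccel cuda`, metadata stream specifiers like `0:a:m:language:eng`, the `hevc_nvenc` encoder, `-b:v`, and stream `copy`) are standard ffmpeg features. The function only builds the command line; it does not run anything.

```python
import shlex


def build_ffmpeg_args(src: str, dst: str, video_bitrate: str = "12M", lang: str = "eng") -> list[str]:
    """Hypothetical helper: build (but do not run) an ffmpeg command that keeps
    only `lang` audio/subtitle streams and re-encodes the video with NVENC.
    Assumes the source tags its audio and subtitle streams with a language."""
    return [
        "ffmpeg",
        "-hwaccel", "cuda",                # use CUDA hardware decoding on the NVIDIA GPU
        "-i", src,
        "-map", "0:v:0",                   # keep the first video stream
        "-map", f"0:a:m:language:{lang}",  # keep only audio tagged with this language
        "-map", f"0:s:m:language:{lang}",  # keep only subtitles tagged with this language
        "-c:v", "hevc_nvenc",              # re-encode video as H.265 on the NVENC encoder
        "-b:v", video_bitrate,             # target average video bit rate
        "-c:a", "copy",                    # pass the kept audio through unchanged
        "-c:s", "copy",                    # pass the kept subtitles through unchanged
        dst,
    ]


if __name__ == "__main__":
    # Hypothetical file names, for illustration only.
    print(shlex.join(build_ffmpeg_args("prometheus.mkv", "prometheus-12M.mkv")))
```

Copying the audio and subtitle streams means only the video is actually transcoded, which is where all of the GPU or CPU time in this test goes.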

To accomplish the task, the Gaming Beast and the Beast Server went head to head. Specs are as follows:

| | Gaming Beast | Beast Server |
|---|---|---|
| CPU | Intel Core i7 7700K, 4 cores @ 4.5GHz | Intel Xeon E5-2630 v4, 10 cores @ 2.2GHz |
| RAM | 32GB DDR4-3200 | 128GB DDR4-1866 ECC RDIMMs |
| Disk | Toshiba 500GB NVMe | 4x Samsung EVO 500GB SATA3 SSD in RAID 10 |
| GPU | GTX 1080 Ti SLI (only one GPU supported for xcode) | GTX Titan X Pascal (not Xp) |

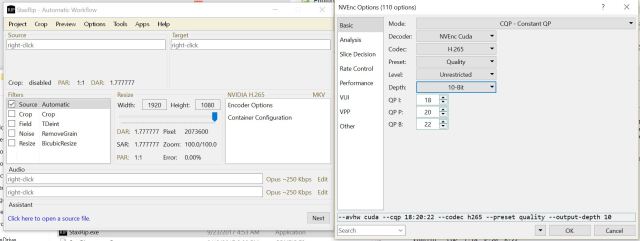

On both NVIDIA systems, the H.265 NVEnc hardware encoder and the CUDA decoder were used for maximum GPU efficiency.

Results

The following scenarios were tested:

- 1080Ti – GPU xcode performance

- Xeon E5 10 core – CPU xcode performance

- GTX Titan X Pascal – GPU xcode performance

The results were a bit surprising. First, spoiler alert: let's start with the champion. We give you the mighty 1080Ti! The 1080Ti SLI system chewed through the xcode task at 128fps and completed it in 22 minutes, while simultaneously running Unigine Heaven 4.0 at 4k/max settings at an amazing 53fps! Really a crazy result!

Transcode in progress:

Heaven running at ludicrous speed simultaneously:

Moving on to the Xeon system, the second-place finisher is, not surprisingly, its GTX Titan X Pascal, managing 125fps and getting the job done in a still impressive 26 minutes.

So it looks like the GPUs can manage two 4k transcodes per hour, which, while a far cry from the lightning-fast performance of 1080p streams (~5 minutes), is still damn good. Unfortunately, last and very much least is the brute-force, pure CPU method. Here we finally see apocalyptic results, with the Xeon only able to manage 3.7fps and needing a whopping 14 hours!
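To put these speeds in perspective, here is a rough back-of-the-envelope sketch that converts an average encode speed into wall-clock time. Only the fps figures come from the results; the film length (2 hours) and source frame rate (the common 23.976 fps film rate) are hypothetical inputs, not measurements from this test, and the model assumes the encoder holds a constant average speed for the whole run.

```python
def frame_count(runtime_minutes: float, source_fps: float) -> int:
    """Total frames in a film of the given runtime and frame rate."""
    return round(runtime_minutes * 60 * source_fps)


def transcode_minutes(frames: int, encode_fps: float) -> float:
    """Minutes needed to process `frames` at a sustained average `encode_fps`."""
    return frames / encode_fps / 60


# Hypothetical input: a 2-hour film at 24000/1001 (~23.976) fps.
frames = frame_count(120, 24000 / 1001)

# Average encode speeds reported in the results.
speeds = {"1080 Ti": 128.0, "Titan X Pascal": 125.0, "Xeon E5 (CPU)": 3.7}

for name, fps in speeds.items():
    minutes = transcode_minutes(frames, fps)
    print(f"{name:>15}: {minutes:7.1f} min ({60 / minutes:.2f} transcodes/hour)")

print(f"GPU vs CPU speedup: {speeds['1080 Ti'] / speeds['Xeon E5 (CPU)']:.1f}x")
```

Under these assumptions the GPUs land at roughly 22 to 23 minutes and the CPU at about 13 hours, the same ballpark as the measured times; the roughly 35x gap between 128fps and 3.7fps is the whole story.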

This was a great test because it showed that in the world of H.265 and 4k transcoding, investing in a GPU pays big dividends, and even core-dense parts like the 10-core E5 can't make up the gap.