Well, I finally got around to getting VXLAN enabled in the lab and oh what fun it was! Something fishy in my setup led me directly to a condition that has hit some other folks during the VXLAN install. What happens is that the Preparation phase in vShield Edge stalls for one or more clusters: the status sits at yellow for quite some time, then eventually times out to red with a cluster status of “Not Ready”. I’m not quite sure what causes this, but it can send you into a hell of endless host reboots and re-preparation steps, and can even leave one set of hosts green while others remain red.

As a review, these are the steps that the VXLAN prep needs to do:

- Deploy the VXLAN VIB from the vShield Edge server (where, in theory, it sits in a download directory) to each host and enable it

- Create a new vDS port group set to the VLAN you specify during setup in the vShield Edge, Network Virtualization, Preparation wizard

- Create new vmkernel virtual NICs to connect to this new port group on each host being prepared

- Configure the VXLAN bits (associate the host to the cluster, etc)

Some things to be aware of in advance:

- The new vmkernel NIC needs an IP. The wizard creates it with DHCP. If you do not have DHCP in the physical VLAN you are dropping these new vmk’s into, they will be stuck with autoconfig addresses (169.254.x.x) and you will need to reconfigure them manually: in the host’s Networking config, go to the “virtual distributed switch” section, click the “Manage Virtual Adapters” link, choose “Edit” for the appropriate vmkernel NIC, and change its properties under the IP Settings tab (phew!)

- The NIC aggregation method for the new VXLAN overlay switch matters, and you can only select it once, during initial preparation in the wizard. The idea here is that any time you create a logical layer 2 domain that sits on top of multiple physical adapters (acting as uplinks), you must configure the operational mode of these physical links. They can either be aggregated into a channel or left as discrete links with failover. Options are:

- failover – no teaming at all; this is the most universally workable config and almost surely the way to go in a home lab.

- LACP active/passive – Link Aggregation Control Protocol. This is a standard protocol by which port-channeled ethernet adapters can negotiate and form channel groups. It’s basically the NIC teaming control protocol. Active means that adapters initiate as well as listen; passive means they just listen. In other words, a whole group of purely passive mode adapters won’t do anything. LACP configuration requires an underlying physical switch that supports LACP.

- Static Etherchannel – a preconfigured ethernet channel on the underlying physical switch, covering the ports that connect to the pNICs serving as uplinks for the vDS that the VXLAN is associated with.
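On the DHCP point above: a vmk that never got a lease is easy to spot, because its address falls in the link-local range 169.254.0.0/16. Here is a minimal Python sketch of that check. The vmk names and addresses are made-up sample values; in practice you would feed in whatever your hosts actually report.

```python
import ipaddress

def stuck_on_autoconfig(vmk_addresses):
    """Return the names of vmkernel NICs whose IPv4 address is link-local
    (169.254.0.0/16), which suggests no DHCP lease was obtained."""
    return sorted(
        name
        for name, addr in vmk_addresses.items()
        if ipaddress.ip_address(addr).is_link_local
    )

# Hypothetical sample data, not taken from a real host.
sample = {
    "vmk0": "192.168.1.21",
    "vmk1": "192.168.1.22",
    "vmk2": "169.254.37.10",
}
print(stuck_on_autoconfig(sample))
```

Any vmk that shows up in the result is a candidate for the manual IP reconfiguration described above.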

I thought I’d capture some of the important remediation tips that can help you keep your sanity! First, the best “one-stop shop” for checking what’s going on, and also for reinitiating the VIB agent install (which seems to be the phase that tends to go wrong), is the vCenter Solutions Manager (found off of “Home” in vCenter) and the vSphere ESX Agent Manager Management tab:

This UI really is your friend and can save you lots of trouble. It will show you exactly what the prep is doing, give reasonably good detail when things go wrong and, most importantly, allow you to initiate the VIB Agent install step again by clicking the refresh icon on the vShield Manager solution. In terms of some deep correction steps that may become necessary depending on what goes wrong, the following are what I found the most valuable:

domain-xxx has already been configured with a mapping

This happens when a preparation was only partially successful, and it is annoying. It makes it impossible to back out a broken config and start over because the wizard will stall here. Luckily, the fix for this is only an API call away! Just head to a machine that has network connectivity to the vShield Manager and run:

curl -i -k -H "Content-type: application/xml" -u admin:default -X DELETE https://<vsm-ip>/api/2.0/vdn/map/cluster/<domain-cXXX>/switches/dvs
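If you would rather script this than type it, the same call can be sketched in Python using only the standard library. This is a rough equivalent of the curl line above, not an official client: the host name and cluster ID below are placeholders, and the admin/default credentials simply mirror the command above.

```python
import base64
import ssl
import urllib.request

def build_unmap_request(vsm_host, cluster_id, user, password):
    """Build (but do not send) the DELETE request from the curl command above."""
    url = f"https://{vsm_host}/api/2.0/vdn/map/cluster/{cluster_id}/switches/dvs"
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        method="DELETE",
        headers={
            "Content-type": "application/xml",
            "Authorization": f"Basic {token}",
        },
    )

def insecure_context():
    """Equivalent of curl -k: skip certificate checks (lab use only)."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False
    ctx.verify_mode = ssl.CERT_NONE
    return ctx

# Placeholder values; substitute your own vShield Manager and cluster ID.
req = build_unmap_request("vsm.example.local", "domain-c123", "admin", "default")
print(req.get_method(), req.full_url)

# To actually send it:
# with urllib.request.urlopen(req, context=insecure_context()) as resp:
#     print(resp.status)
```

Building the request separately from sending it makes it easy to eyeball the URL before firing a DELETE at the manager.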

Agent VIB module is not installed

This error will pop up in vCenter. I never quite figured out why, but some things to verify first:

- Under the vCenter Solutions Manager, make sure the Agent Manager status is healthy (mine was)

- Make sure that the VXLAN.zip file is actually available on the vShield Manager and accessible from vCenter at:

- Verify that the hosts are healthy, have connectivity on their management networks, are seen in vCenter, etc (table stakes stuff, all green on my install)

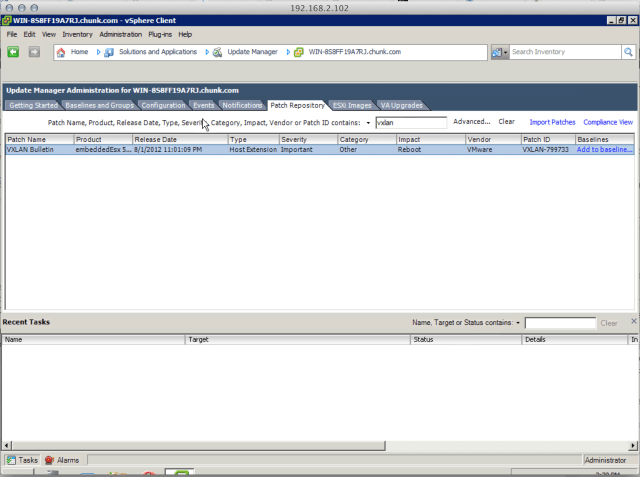

Despite all of the above boxes being checked, it’s actually still possible under some strange mix of conditions to have the preparation fail continually because the agent is not installed. What I ended up doing here is just manually installing it. The first step is to either get the module from the vShield Edge (VXLAN.zip), or get it from vCenter Update Manager. It can be downloaded as part of this bulletin:

Once you have the file in hand, get it somewhere accessible to each host, either locally on a volume or on a share that is reachable under the /vmfs/volumes path. Then do a good old manual CLI install!

esxcli software vib install --depot="<path to vxlan.zip file>"

After manually installing the agent, make note of the status and if it requires a reboot:

If the install worked ok, return to the vCenter Solutions Manager and refresh the vShield solution status. Returning to vShield Manager preparation and refreshing that, you should see the status go to green. Failing that, the log in vCenter should at least show the stage moving forward.

In the end, the install can be a bit hairy, but I did get it working, so don’t give up even if your home lab is a mess like mine!

UPDATE: so in reviewing lots of logs from the ESX hosts, the VSM, and my physical switch, I have come to the conclusion that my initial troubles all started with trying to get a working LACP setup going and then compounded from there.

Another issue I discovered (and I should have realized this one) is that the vxlan.zip VIB module is actually distributed by vCenter to the individual hosts. My DNS actually runs virtually (AD) on ESXi and, as I was experimenting with preparation, at various points my DNS was offline. This will definitely cause an issue with the preparation workflow even if the VIB was installed manually in some cases. The symptoms are these entries showing up in /var/log/esxupdate.log:

2013-09-15T00:22:46Z esxupdate: esxupdate: ERROR: File "/build/mts/release/bora-1157734/bora/build/esx/release/vmvisor/sys/lib/python2.6/site-packages/vmware/esx5update/Cmdline.py", line 106, in Run

2013-09-15T00:22:46Z esxupdate: esxupdate: ERROR: File "/build/mts/release/bora-1157734/bora/build/esx/release/vmvisor/sys/lib/python2.6/site-packages/vmware/esximage/Transaction.py", line 71, in DownloadMetadatas

2013-09-15T00:22:46Z esxupdate: esxupdate: ERROR: MetadataDownloadError: ('http://WIN-8S8FF19A7RJ.chunk.com:80/eam/vib?id=f553e82e-868f-4884-a7d9-86cde7a10d0b-0', None, "('http://WIN-8S8FF19A7RJ.chunk.com:80/eam/vib?id=f553e82e-868f-4884-a7d9-86cde7a10d0b-0', '/tmp/tmplFItQC', '[Errno 4] IOError: <urlopen error [Errno -3] Temporary failure in name resolution>')")

2013-09-15T00:22:46Z esxupdate: esxupdate: DEBUG: <<<
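The telltale part of those entries is the name resolution failure buried inside the MetadataDownloadError. As a quick way to confirm that diagnosis, here is a small Python sketch that scans esxupdate.log text for that error and reports which hostname failed to resolve. The regular expression is a guess based on the entries above, so it may need adjusting for other builds.

```python
import re

# Pattern inferred from the log entries above; treat it as an assumption.
_ERR = re.compile(r"MetadataDownloadError: \('https?://([^:/']+)")

def unresolved_hosts(log_text):
    """Return hostnames esxupdate failed to resolve, in order of first appearance."""
    hosts = []
    for line in log_text.splitlines():
        if "Temporary failure in name resolution" not in line:
            continue
        match = _ERR.search(line)
        if match and match.group(1) not in hosts:
            hosts.append(match.group(1))
    return hosts

# Abbreviated copy of the error entry shown above.
sample_line = (
    "2013-09-15T00:22:46Z esxupdate: esxupdate: ERROR: MetadataDownloadError: "
    "('http://WIN-8S8FF19A7RJ.chunk.com:80/eam/vib?id=f553e82e-868f-4884-a7d9-86cde7a10d0b-0', "
    "None, \"... [Errno -3] Temporary failure in name resolution>')\")"
)
print(unresolved_hosts(sample_line))
```

To run it against a real log, copy /var/log/esxupdate.log off the host and pass the file contents to `unresolved_hosts`.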

Here is how things look on a successful run:

2013-09-15T00:25:15Z esxupdate: vmware.runcommand: INFO: runcommand called with: args = '['/sbin/bootOption', '-rp']', outfile = 'None', returnoutput = 'True', timeout = '0.0'.

2013-09-15T00:25:15Z esxupdate: vmware.runcommand: INFO: runcommand called with: args = '['/sbin/bootOption', '-ro']', outfile = 'None', returnoutput = 'True', timeout = '0.0'.

2013-09-15T00:25:15Z esxupdate: downloader: DEBUG: Downloading http://WIN-8S8FF19A7RJ.chunk.com:80/eam/vib?id=f553e82e-868f-4884-a7d9-86cde7a10d0b-0 to /tmp/tmpj2h2ug...

2013-09-15T00:25:15Z esxupdate: Metadata.pyc: INFO: Unrecognized file vendor-index.xml in Metadata file

2013-09-15T00:25:16Z esxupdate: vmware.runcommand: INFO: runcommand called with: args = '['/usr/sbin/vsish', '-e', '-p', 'cat', '/hardware/bios/dmiInfo']', outfile = 'None', returnoutput = 'True', timeout = '0.0'.

2013-09-15T00:25:16Z esxupdate: esxupdate: INFO: All done!

The advice here is that upfront homework is critical with VXLAN. Know for sure that your base environment is planned out and ready (easier in a real corporate setting with fully functional gear than in a lab held together with duct tape) before you begin. When in doubt, stick with the easiest config (in this case, basic failover as the NIC team policy!) and make sure all of the core plumbing is running (like DNS!)