Well, I decided that a hosted desktop would be cool for the new lab (especially since I’m going to provide VPN access to friends and colleagues as a favor). Of course, the easy way to do this would be to just install a desktop VM, right? Of course. But we don’t do things the easy way here at Complaints HQ! If we did, we’d have nothing to complain about! So what was my solution to the hosted desktop requirement? Well, install VMware View, of course! I haven’t had a chance to document the full experience yet, but before I even get started I want to share one little tidbit that drove me crazy this weekend. First, though, VMware View has multiple components and concepts worth getting to know and understand:

Concepts:

- VDI – this one is a no-brainer, right? Desktop OS images run on a server and are accessed remotely by users running some sort of client software (the VMware View client). The client uses a display protocol (PCoIP for VMware, RDP for Microsoft, ICA for Citrix, etc.) to send screen updates to the Windows, Mac, Android (etc.) computing device and user input back to the desktop.

- Linked Clones – storing tons of desktops for users takes a lot of space. And not cheap Dell commodity SATA2 drive space either: expensive, possibly SSD-backed, server blade space. Since Windows X.Y is pretty much 90% the same for Z users (you don’t need 900 copies of GDI32.DLL, in other words), there is an opportunity to be more storage efficient. The concept of “Linked Clones” takes the “gold master” approach and makes it real-time and dynamic. You load up one master Windows image and spin off a number of replicas of it. As users order up desks, any deltas from the standard are stored temporarily in small, dynamic clones linked to a replica. This saves tons of space and also allows for smart placement of the active bits of an OS volume (so you don’t need to spend zillions on SSD to get the infrastructure to be performant).

- User Profiles – a chunk of the data that is unique across a set of images is user personalization data. There are a number of ways to manage it, and most VDI systems provide some mechanism for doing so. The sledgehammer way is to persist entire images forever (the essentially static 1:1 relationship). The elegant way is to use the Linked Clone approach for the common OS bits, persist the user profile in a separate storage location, and draw any needed associations via configuration metadata.

- Desktop Pools – the idea of VDI is to manage lots of virtual desktops at scale. It may also need to provide different tiers of service and possibly different architectures: maybe some power users do get the 1:1 permanently persisted desktop, while call center workers, for example, make do with near fully commodity “built on demand” desks. That calls for an extensible management structure, and pools provide this flexibility. A “pool” is essentially a set of configuration options bundled together into a service catalog entry.

- Entitlements – lastly, we want this all to be easy for users. Entitlements take a user identity (typically from AD when we’re talking about Windows users and Windows desks) and associate it with some level of configuration and a designated pool. This way, when the call center worker logs in, they are just presented with a desk without having to think, and the same goes for the power user. The fact that they have taken two very different paths to that login screen should be completely invisible to them.
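To make the pool/entitlement relationship concrete, here is a toy model of the idea. This is not View’s API or data model. `Pool`, `Entitlement`, `resolve_pool` and the group names are all hypothetical, and “first match wins” is a simplification of my own. Real View can entitle a user to several pools and let them pick one in the client.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Pool:
    """Hypothetical model: a pool bundles config options into one catalog entry."""
    name: str
    clone_type: str      # hypothetical label: "linked" or "full"
    persistent: bool     # True = 1:1 desktop kept forever, False = built on demand
    settings: Dict[str, str] = field(default_factory=dict)


@dataclass
class Entitlement:
    """Hypothetical model: maps an AD group name to a pool key."""
    ad_group: str
    pool: str


def resolve_pool(user_groups: Set[str],
                 entitlements: List[Entitlement],
                 pools: Dict[str, Pool]) -> Optional[Pool]:
    """Return the first pool the user's groups entitle them to, or None.

    Simplification: first match wins, so list more specific entitlements first.
    """
    for ent in entitlements:
        if ent.ad_group in user_groups:
            return pools[ent.pool]
    return None
```

For example, with a persistent full-clone pool for `PowerUsers` and a non-persistent linked-clone pool for `CallCenter`, a call center login resolves to the linked-clone pool. The user never sees which path was taken.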

Components:

- View Connection Server – this is the connection broker. Basically, think of the connection broker as the workflow orchestrator and service catalog for your Virtual Desktop Infrastructure. Without a connection broker, you’d have to create and deploy individual desktop VMs for every user and then provide them with direct login info. The connection broker components let you fully automate this process. They also provide other awesome and advanced features like dynamic setup and teardown of desktop images, full customization, and linked clones for storage efficiency (and that’s just naming a few!). This is clearly the heart and soul of View. The Connection Server pretty much requires a standalone install and uses a web front end for configuration over standard HTTPS. It is the point from which you manage all of the other (pretty much headless) components. The install is part of the “connectionserver” package.

- View Security Server – this box is basically a front-end proxy for the Connection Server, designed to be dropped in a DMZ. Security Servers can scale out in a non-fixed ratio of SS:CS, which is important for real deployments at scale that plan to advertise desks directly to users over the Internet. This is also a standalone box, pretty much by design, since it should sit in the DMZ. The install is also part of the “connectionserver” package (it is a selectable role).

- View Composer – this is the component that provides the automated provisioning management and the aforementioned storage efficiency. It’s important, it runs headless, and it is configured through the View Connection Server UI (the “VMware View Administrator” portal). It doesn’t necessarily need to run standalone. By default it communicates over SSL on port 18443.

- View Transfer Server – transfer servers handle the data movement behind desktop check-out and check-in, and as a result they are designed to naturally scale out. The Transfer Server is basically a web server with code that handles this process for View Local Mode, where a user checks a desktop out of a pool to run on their own machine and later checks it back in. It also keeps a repository of the base images used for those local-mode desktops. For some unknown reason it runs on Apache for Windows (?!) on port 80, and it should be OK as a shared component.

And that brings me to my heads-up. I attempted to “one box” the View Composer and the Transfer Server, mainly because having 50 VMs to handle the launching of 3 desktops seems pretty ridiculous. I hit a wall where the Transfer Server reported “bad repository” no matter what I tried. Here is what the issue turned out to be:

What do these two screenshots mean? They mean that IIS seems to have been stepping on Apache. I believe IIS got installed (and activated) as part of the .NET 3.5.1 feature addition required by Composer. At that point it, of course, took over port 80, which caused Apache to shut down. Disabling IIS and restarting Apache did the trick. Interestingly, Composer still seems to work, though I am not sure why. I know that Composer does not use Apache, but it may not use IIS either. It may be a standalone app listening on port 18443 and just using IIS as a certificate generator. We shall see! On a side note, it would be really nice if VMware rationalized their supporting technology infrastructure. So not Oracle and SQL Server and Postgres and Java and .NET and IIS and Apache, but rather just one from each category. Hopefully this will happen eventually.

UPDATE: it looks like the one-box is working, and that IIS and Apache should be configured to co-exist on the one box. More on this below.
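If you suspect the same IIS-versus-Apache collision, one quick check is to see which web server actually answers on port 80. You can do this by reading the HTTP `Server` response header. Below is a minimal sketch using Python’s standard `http.client`. It assumes the servers still send their default headers: IIS normally identifies itself as `Microsoft-IIS/<version>` and Apache as `Apache...`. Either can be configured to hide or change this, so treat the result as a hint, not proof. The helper names are my own.

```python
import http.client
from typing import Optional


def server_header(host: str, port: int = 80, timeout: float = 3.0) -> Optional[str]:
    """Send an HTTP HEAD request and return the Server header.

    Returns "" if the response has no Server header, or None if nothing answered.
    """
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        conn.request("HEAD", "/")
        return conn.getresponse().getheader("Server", "")
    except (OSError, http.client.HTTPException):
        # Refused, timed out, or not speaking HTTP: nothing useful answered.
        return None
    finally:
        conn.close()


def guess_owner(header: Optional[str]) -> str:
    """Rough guess at which web server owns the port, based only on the Server header."""
    if header is None:
        return "nothing answering"
    if "Microsoft-IIS" in header:
        return "IIS"
    if header.startswith("Apache"):
        return "Apache"
    return "unknown (" + header + ")"
```

Run it against your Transfer Server box, for example `guess_owner(server_header("transfer01.lab.local"))` (a hypothetical hostname). If it says IIS when you expected Apache, you have found the culprit.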

Handy Checklist to Avoid the Gotchas:

Lots and lots has been written on View and there are plenty of end-to-end walkthroughs. So instead of pushing another of those onto the stack, I thought it might be more useful to put together a handy list of things to keep in mind when doing View testing. I also plan to keep this a living list as I discover new and potentially interesting things.

- One Boxing – As mentioned above in the components section and the specific example of Transfer Server and Composer co-existing, it is possible to one-box certain bits, but there are a few things to keep in mind. The first is that certain components naturally separate, and even in a lab setting it is best to keep them separate (Connection Server and Security Server are the example here). The second is that many View components are web services and, unless you get into complex port customization, can’t necessarily co-exist. In particular, the Transfer Server and the Connection Server both want port 80. What I’ve found to be the minimum footprint is as follows:

- Connection Server – this guy should stand alone just to keep your sanity

- Security Server – this guy is optional, but if you need one for your testing it should be kept alone and kept on a separate VLAN to simulate a DMZ

- Transfer Server – this guy should also be kept separate because it wants port 80 and therefore can’t co-exist with the Connection or Security servers without customizing ports. The Composer, however, can be co-located alongside the Transfer Server.

- Database Server – View likes to consume databases. It is probably best to stand up a full SQL Server database (or use an existing one) rather than attempting to use SQL Express. Note that you cannot use anything newer than SQL 2008 R2. The databases that matter here are the View Composer database and the Events database. The Connection Server itself keeps its configuration in its own bundled AD LDS instance rather than in SQL.
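One way to sanity-check a proposed one-box layout is to write down which ports each component wants and look for collisions per host. This is a minimal sketch using only the default ports described in this post: 80 for Connection and Transfer, HTTPS 443 for Connection, and 18443 for Composer. The 80/443 entry for the Security Server is my assumption, based on its role as a web front end for the Connection Server. The component keys and host names are hypothetical. The sketch does not model supporting software such as IIS, which is exactly what grabbed port 80 on me above.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Default ports per component (assumption: no custom port configuration).
COMPONENT_PORTS: Dict[str, Set[int]] = {
    "connection": {80, 443},
    "security": {80, 443},   # assumed from its role as a web front end
    "transfer": {80},
    "composer": {18443},
}


def port_conflicts(layout: Dict[str, List[str]]) -> Dict[str, Dict[int, List[str]]]:
    """For each host, report any port claimed by more than one component."""
    result: Dict[str, Dict[int, List[str]]] = {}
    for host, components in layout.items():
        claims: Dict[int, List[str]] = defaultdict(list)
        for comp in components:
            for port in sorted(COMPONENT_PORTS[comp]):
                claims[port].append(comp)
        clashes = {port: comps for port, comps in claims.items() if len(comps) > 1}
        if clashes:
            result[host] = clashes
    return result
```

The minimum footprint above comes back clean. For example, `{"cs01": ["connection"], "ss01": ["security"], "ts01": ["transfer", "composer"]}` yields `{}`, while putting Connection and Transfer on one box flags port 80.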

- Master Image Prep – A default Windows installation is not ready for use as a View master image. There is a checklist of items that must be done to make a Windows install a valid template:

- Make sure the vNIC is set to VMXNET3

- Make sure that PCoIP is allowed through the Windows firewall for the appropriate networks. This part is significant, as Windows firewall rules are based on the “domain”, “public” and “private” profiles, so consider your network path for clients carefully.

- Configure the Windows network adapter to use DHCP

- Activate Windows

- Join the domain

- Disable display power-off for PCoIP (set “Turn off the display” to “Never”)

- Disable hibernation

- Install VMware Tools

- Install the VMware View Agent (make sure to install 5.3.1 for Windows 8! Earlier versions will not work)

- Take a snapshot
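If you build master images more than once, it can help to record what you actually did and check it against the list. This is a minimal sketch with hypothetical step identifiers of my own. It enforces the two orderings the list above implies: VMware Tools goes in before the View Agent, and the snapshot is the final step, since linked clones are built from that snapshot and anything done afterward won’t be in it.

```python
from typing import List

# Hypothetical identifiers for the prep steps above, in checklist order.
PREP_STEPS: List[str] = [
    "vmxnet3_nic",
    "pcoip_firewall_rules",
    "dhcp",
    "activate_windows",
    "join_domain",
    "display_never_off",
    "disable_hibernate",
    "vmware_tools",
    "view_agent",
    "snapshot",
]


def order_problems(performed: List[str]) -> List[str]:
    """Compare a record of performed steps (in the order done) against the checklist."""
    problems: List[str] = []
    missing = [step for step in PREP_STEPS if step not in performed]
    if missing:
        problems.append("missing: " + ", ".join(missing))
    # Tools before Agent, as listed above.
    if ("vmware_tools" in performed and "view_agent" in performed
            and performed.index("view_agent") < performed.index("vmware_tools")):
        problems.append("View Agent installed before VMware Tools")
    # Clones are built from the snapshot, so it has to come last.
    if performed and performed[-1] != "snapshot":
        problems.append("snapshot must be the last step")
    return problems
```

An empty list means the record matches the checklist. Anything else tells you what to redo before provisioning a pool from that snapshot.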

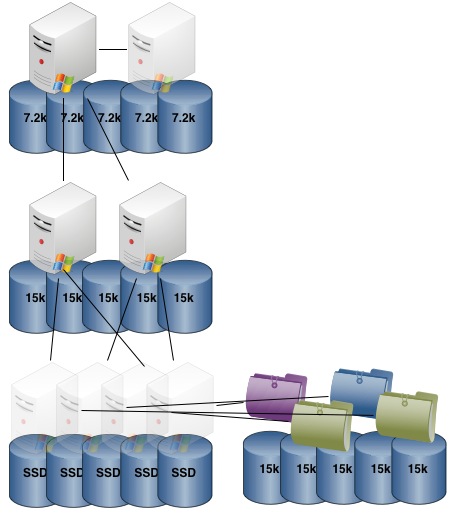

- Storage Placement – we touched on this above when discussing Linked Clones as a concept, but it is worth expanding on as we consider which datastore each type of file should be placed on. View desktop storage is hierarchical.

At the most static level, we have a master image (known as the “Parent VM” in View admin parlance) and a snapshot. Together these provide the source for a given desktop version. Changes made to the master image reach the children provisioned from it once you take a new snapshot and recompose the pool. These are full size (meaning a full desktop and a full snapshot). They are accessed only during the initial provisioning of replicas, so they can be kept on a datastore backed by high-capacity, lower-IO storage.

One level out from the master image we have the Replicas. The Replicas are really the “working bits”, the images from which Linked Clones are dynamically provisioned. Replicas are full sized and accessed frequently, but they are read only.

From the Replicas, Linked Clones are built. Linked Clones are disposable (used for the life of a desktop instance, then discarded), but they are accessed heavily, with the read/write mix typical of a desktop OS. They contain the parts of a desktop image that must be changed, or the bits that have changed during a given session.

Personally, I think that, if budget allows, all VDI should really run off SSD in production. That said, there are some minimums worth considering:

- Master Images can be stored on NL-SAS/7.2k SATA arrays

- Replicas should be placed on either SSD or 15k SAS arrays

- Linked Clones should be placed on the highest IO storage you can afford (SSD strongly preferable)

- Profile data can be placed on NL-SAS/7.2k SATA arrays although 15k of course can’t hurt
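Those minimums can be turned into a quick placement check. This is a sketch under two simplifying assumptions. First, the three storage classes are ranked on a single performance scale, whereas real performance depends on the array, RAID level and cache. Second, 15k SAS is treated as the floor for Linked Clones, with a warning whenever they are not on SSD. The tier names and file-type keys are hypothetical labels.

```python
from typing import Dict, List

# Relative performance rank of the storage classes above (higher = more IO).
TIER_RANK: Dict[str, int] = {"nl-sas-7.2k": 1, "sas-15k": 2, "ssd": 3}

# Suggested minimum tier per file type, following the list above.
MIN_TIER: Dict[str, str] = {
    "master": "nl-sas-7.2k",
    "replica": "sas-15k",
    "linked_clone": "sas-15k",   # assumption: 15k floor, SSD strongly preferred
    "profile": "nl-sas-7.2k",
}


def check_placement(placement: Dict[str, str]) -> List[str]:
    """Return warnings for file types placed below their suggested tier."""
    warnings: List[str] = []
    for kind, tier in placement.items():
        floor = MIN_TIER[kind]
        if TIER_RANK[tier] < TIER_RANK[floor]:
            warnings.append(f"{kind} on {tier}; suggested minimum is {floor}")
        elif kind == "linked_clone" and tier != "ssd":
            warnings.append("linked_clone not on ssd; SSD strongly preferable")
    return warnings
```

A placement of masters and profiles on NL-SAS with replicas and clones on SSD produces no warnings. Dropping replicas onto NL-SAS gets flagged.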

One final point of note is that all of the above applies specifically to Linked Clones. With Dedicated Instances, each desktop is a full copy of the Master Image, so you need both capacity and high I/O for storing dedicated disks. I prepared a quick diagram to help visualize the Linked Clone storage relationship. The file folders represent user profile data being persisted on a separate class of storage, so user profile state is maintained across generations of ephemeral desktops.
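A bit of back-of-the-envelope arithmetic shows why the Linked Clone versus Dedicated Instance difference matters for capacity. This is a simplified sketch. The master image is left out because both approaches need it, and so is profile data. Each linked clone’s delta is assumed to be a fixed size, although in practice deltas grow during a session until the desktop is refreshed. The `replicas` parameter allows for more than one replica, for example if clones are spread across several datastores. All sizes are made-up inputs.

```python
def full_clone_gb(desktops: int, image_gb: float) -> float:
    """Dedicated instances: every desktop is a full copy of the master image."""
    return desktops * image_gb


def linked_clone_gb(desktops: int, image_gb: float, delta_gb: float,
                    replicas: int = 1) -> float:
    """Linked clones: full-size read-only replica(s) plus a small delta per desktop.

    Assumes a fixed per-desktop delta size (a simplification).
    """
    return replicas * image_gb + desktops * delta_gb
```

With hypothetical numbers of 50 desktops, a 40 GB image and a 2 GB delta per clone, full clones need 50 × 40 = 2000 GB. Linked clones off a single replica need 40 + 50 × 2 = 140 GB. That gap is the whole point of putting the replica and clones on the expensive storage while the master sits on cheap disks.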

- Certs – you will see all kinds of warnings about using private certs and not having an enterprise PKI with configured trusted roots and CRLs. You can ignore them. A properly configured PKI at the client level is certainly a better user experience in production, but private untrusted certs work just fine for testing. You’ll just see the usual warnings at the access layers and errors in the View event logs.

- DNS – DNS is a bit trickier. VMware products are often picky about name versus IP access, expecting whichever one was used during initial configuration. With View, I observed that the OS X client really wants to reach the View Connection Server by name. The Android client, on the exact same infrastructure, was fine connecting via IP. In my opinion, the safe bet is to populate your local DNS with FQDNs for each View component (this is very easy if you use your AD DNS as primary, since they’re all domain joined). The exception is the Security Server, which should also be published in your public DNS where production access via the Internet is allowed.
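Before pointing clients at the lab, it is worth confirming that every component name actually resolves from the client’s side of the network. This is a minimal forward-lookup sketch using Python’s standard `socket.getaddrinfo`, restricted to IPv4. It only checks that a name resolves. It does not check that the address is the right one, and it does not do reverse lookups. The FQDNs you feed it are your own.

```python
import socket
from typing import Dict, List


def resolve_all(names: List[str]) -> Dict[str, List[str]]:
    """Forward-resolve each name to its IPv4 addresses; [] means the lookup failed."""
    results: Dict[str, List[str]] = {}
    for name in names:
        try:
            infos = socket.getaddrinfo(name, None, socket.AF_INET, socket.SOCK_STREAM)
            # Each entry is (family, type, proto, canonname, (address, port)).
            results[name] = sorted({info[4][0] for info in infos})
        except socket.gaierror:
            results[name] = []
    return results
```

For example, `resolve_all(["viewcs01.lab.local", "viewts01.lab.local"])` (hypothetical names) returns an empty list for any component the OS X client would trip over.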

In any event, this entry is just getting started, so stay tuned!