Ah, the joys of revisiting a complex configuration that you haven’t touched in 6 months… Few things can match it! It was sobering to realize I had no clue what state I had really left my vCenter in the last time I powered it down. Given the types of things I generally test, I definitely should start keeping a log (and maybe using the “passwords on Post-its” method favored by end users the world over!). Since I couldn’t locate my ESXi 5 DVD for some reason, I decided to download 5.1, install it on the new hosts, upgrade the old hosts, and then tackle a vCenter upgrade to see what happened from both a licensing and a technical standpoint. Sure, you can do a bunch of research on Google and get a sense of how things will go, but there is no substitute for breaking your lab badly when it comes to really learning what to do (and not to do) for major upgrades.

Things started off pretty easily. Download the vSphere 5.1 eval, burn a disc (CD-R in this case), pop the disc into the fancy new $16 LG drive, and power up. One quick thing to note is that the Gigabyte BIOS has virtualization set to “off” under “Advanced BIOS settings”. Best to flip that bit to “on” before installing vSphere! (The install will work without it, though, so you can turn it on later.)

Rinse and repeat for the second machine, and we had two new working vSphere 5.1 nodes in about 30 minutes. On a side note, the Trendnet KVM worked well (although I’m not loving the white finish, and the cable connection design is cluttered). With the two new hosts up and running, I decided to go ahead and upgrade the original two:

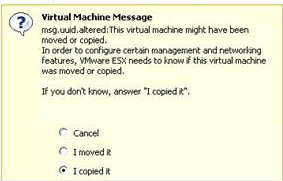

The upgrade went just as smoothly as the new install from a console perspective. You have the option to upgrade while preserving VMFS, or to do a new install that either preserves or blows away the existing VMFS. I went for the in-place upgrade in order to preserve the existing configuration. Once all hosts were at the ESXi 5.1 level, it was time to connect via the vSphere Client and survey the damage. Since I run my vCenter (and its component ecosystem: AD, SQL Server, Mobile Access Gateway, etc.) as a set of virtual machines on the hosts they manage, I need to connect directly to a host to start vCenter before I can use it to manage anything. I discovered that I had shifted the bulk of my guests, in particular vCenter, vCenter Mobile, SQL and AD DC1, over to host 1. If I remember right, I was preparing for a migration to iSCSI and hadn’t quite gotten that working, so that will be another thing to do as part of this upgrade. Bringing the first DC online, I was greeted with this familiar alert:

More evidence that the iSCSI update was where I left off. I’m pretty sure I had moved the guests over, so I went with that and proceeded to start up Windows. It took about 100 tries to remember what I had set the password to, but I got in and started the grueling process of bringing a Windows system that has spent half a year offline up to date! 32 updates and a reboot later, AD was back online and looking generally healthy. The second DC was in the same shape, obviously, so the entire process had to be repeated once DC1 was back online. Next, VMware Tools needed to be upgraded to 5.1 on both DCs since the host version had changed. With a healthy, fully patched AD up and running, it was time to take a look at the vCenter server.

For the upgrade from 5.0 to 5.1, the following installation order is required:

- Install the new Single Sign-On Service – a required prerequisite for vCenter and, as the name implies, a centralized identity and access management facility

- Upgrade the vCenter Inventory Service – this can be done in-place

- Upgrade vCenter – this can also be done in-place
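As a rough illustration, the required order above can be written down as a dependency map and sorted, which gives a checklist that is hard to run out of sequence. This is only a sketch: the component names are plain labels (not installer identifiers), and placing the Web Client and Update Manager after vCenter simply mirrors the order followed in this walkthrough. It assumes Python 3.9 or later for `graphlib`.

```python
from graphlib import TopologicalSorter

# Each component maps to the set of components that must be done first.
# The names are labels for illustration only, not installer identifiers.
DEPENDS_ON = {
    "Single Sign-On": set(),
    "Inventory Service": {"Single Sign-On"},
    "vCenter Server": {"Inventory Service"},
    # Add-ins are handled after vCenter in this walkthrough.
    "vSphere Web Client": {"vCenter Server"},
    "Update Manager": {"vCenter Server"},
}


def upgrade_order(depends_on):
    """Return the components in an order that respects every dependency."""
    return list(TopologicalSorter(depends_on).static_order())


order = upgrade_order(DEPENDS_ON)
for step, component in enumerate(order, start=1):
    print(f"{step}. {component}")
```

The first three steps always come out as Single Sign-On, Inventory Service, vCenter Server. The two add-ins follow in either order, since neither depends on the other.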

As always, there is some information/prerequisite work to do first:

- Make sure vCenter is healthy (verify database availability and integrity, make sure login works, etc.)

- Have all passwords and service accounts (if applicable) handy

- Make a note of the SSO password set during the SSO install (needed during vCenter upgrade)
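Part of the health check above can be scripted. The sketch below only confirms that the relevant TCP ports answer, which is a much weaker test than verifying database integrity or an actual login. The host names are hypothetical placeholders, and the ports (443 for vCenter HTTPS, 1433 for SQL Server) are the usual defaults, so adjust them if your install uses custom values.

```python
import socket

# Hypothetical lab host names; the ports are common defaults and are
# assumptions about the environment, not values taken from this install.
CHECKS = [
    ("vCenter HTTPS", "vcenter.lab.example", 443),
    ("SQL Server", "sql.lab.example", 1433),
]


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


def run_checks(checks, timeout=3.0):
    """Map each check label to True (reachable) or False (not reachable)."""
    return {label: port_open(host, port, timeout) for label, host, port in checks}
```

Calling `run_checks(CHECKS)` before starting the installers returns a dictionary of labels and booleans. Any `False` is worth chasing down before going further.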

The vCenter upgrade will warn of any extensions that will need to be upgraded out-of-band because they are broken by the move from 5.0 to 5.1. In my case it was the vCenter Update Manager extension, the vSphere Web Client, and the Amazon AWS VMimport add-in. Below is a screenshot walkthrough of the installation process, starting with the Single Sign-On Service. The keen-eyed will notice that the dialog box title bars indicate “Simple Install”. As it turned out, that was a no-go. The “Simple Install” cannot actually be used for an in-place upgrade. It hits a wall during the Inventory Service installation and fails to recognize the existing Inventory Service database. The Single Sign-On service did install successfully, however, so the screen caps below are valid:

With Single Sign-On installed and configured, it was time to update the Inventory Service. This is also a quick and easy in-place upgrade assuming everything is healthy and available with the existing version. The usual Inventory Service options can be set:

With the prerequisites out of the way, vCenter is ready to be upgraded to 5.1. As with the Inventory Service, the in-place upgrade works well. There is an opportunity to automatically upgrade the host agents (which I allowed the install to do) and also to set the vCenter service account (I decided to opt for Local System this time rather than a service account). In addition, individual TCP access ports can be configured for the various vCenter service facilities. Keep the Inventory Service URL handy just in case the install doesn’t auto-populate it correctly:

Once vCenter has been updated, it is important to remember to update any additional add-ins, including the vSphere Web Client and the vCenter Update Manager. These are critical core components, and the 5.0 versions do not work with vCenter 5.1. First up is the vSphere Web Client:

Some notables from the install:

- the install will locate the existing installation and indicate that it will perform an upgrade

- port assignments for the Web Client can be set during the install (they can be changed later if needed)

- this part is new – have the SSO information on hand as the Web Client needs to register with the SSO service

OK, time for the vCenter Update Manager upgrade:

Notables from this install:

- the 5.1 upgrade will break some things, namely VM guest OS patch baselines, host upgrade baselines and files, and ESX 3.5 host patches. These items don’t apply to my install, so they could be safely ignored, but if they do apply to yours, take note, as some remediation could be required. You can also set VUM to download updates from the standard sources immediately after install.

- Have the vCenter creds on hand

- The default DSN for the existing VUM database should be discovered automatically. If not, have DBMS info on hand and be prepared to remediate

- Upgrading the database is not optional, but the install pauses to have the admin acknowledge that the existing database has been backed up before proceeding

- The network identification and port configuration of the server can be set. Either an IP address or a hostname works as an identifier and, if using custom ports, be sure to make a note of them

That’s all there is as far as upgrading these two components goes. Any additional vCenter add-ons will need to be independently upgraded as well. It is important to inventory these before walking the upgrade path, just in case the vendors have not yet released 5.1-compliant code. For my install I rely pretty heavily on the Amazon AWS VMimport add-in for customer testing. The current version of the connector technically only supports up to vCenter 5.0. The upgrade will leave the OVA in place, so let’s go ahead and see if it works:

And so the screenshots above tell the sad tale… The AWS EC2 Import Connector for vCenter is incompatible with vCenter 5.1. AWS support has confirmed this. Hopefully this will be fixed soon, as the vCenter plug-in is quite handy! In the meantime, EC2 import can still be accomplished just fine from the command line tools. I will cover the process for that in another entry, but basically it involves converting a VM to OVF (same as with the plug-in) and then running the AWS command line tools from either Windows or Linux to import the VM directly via the API.
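Going back to the advice to inventory add-ons before upgrading, the check itself is simple enough to sketch in a few lines. The idea is to record the highest vCenter version each add-on claims to support and flag anything that falls short of the target. Only the EC2 connector entry reflects what this post describes (supported up to 5.0). The second entry and the function names are made up for illustration.

```python
def parse_version(text):
    """Turn a dotted version string such as '5.1' into a tuple like (5, 1)."""
    return tuple(int(part) for part in text.split("."))


def blocked_addons(max_supported, target):
    """Return the add-ons whose highest supported version is below target."""
    target_version = parse_version(target)
    return sorted(
        name
        for name, version in max_supported.items()
        if parse_version(version) < target_version
    )


# Highest vCenter version each add-on is known to support.
INVENTORY = {
    "AWS EC2 Import Connector": "5.0",   # as noted in this post
    "example-monitoring-plugin": "5.1",  # hypothetical entry for illustration
}

print(blocked_addons(INVENTORY, "5.1"))
# → ['AWS EC2 Import Connector']
```

Comparing versions as integer tuples rather than strings avoids surprises such as "5.10" sorting before "5.9".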

Some notes on the screen shots above:

- After starting up the EC2 connector VM, it will be necessary to re-enable the add-on from the vCenter plug-ins management section. Right-click it and choose enable

- Those ugly IE errors are a sure sign that Internet Explorer Enhanced Security Configuration (IE ESC) is turned on on the server running vCenter. There is no need for it here, and it causes a lot of problems. The second-to-last screenshot above shows how to turn this facility off. It is pretty straightforward in Windows 2008: Start->Administrative Tools->Server Manager. On the summary page you will see Security. From the Security dialog you can select the IE ESC configuration and turn it off for both users and admins

- To verify that the connector is working, you can log in from the console (login: root, empty password), ping the default gateway, and verify that name resolution is working

- Connecting to the management interface for the EC2 connector with a browser (as directed by the console) will show all green “OK” if everything is working as per screenshot 7 above

- Once the add-on has been re-enabled, the “Import to EC2” tab will appear on the view for any VM. Unfortunately, clicking on this tab will not bring up the EC2 options as it should, but rather will terminate with an “Authorization Failure” after a longer-than-usual pause.

That’s it for now, but stay tuned for the lowdown on reconfiguring vCenter, enabling EVC, migrating to iSCSI and all sorts of other interesting observations!